Integrating OpenStack and Kubernetes creates a unified, powerful platform capable of running virtually any application workload. It's the definitive strategy for running legacy VM-based monoliths alongside modern, containerized microservices on a single, API-driven infrastructure.

This guide provides a technical blueprint for bridging the gap between your existing infrastructure and your cloud-native future.

The Power Duo: Why OpenStack and Kubernetes Work Together

Think of your data center infrastructure as a raw, undeveloped plot of land. Before you can build, you need a system to provision and manage the fundamental utilities and access—the land itself, power, water, and roads.

This is precisely the role of OpenStack.

OpenStack is your Infrastructure as a Service (IaaS) platform, designed to programmatically provision and manage foundational infrastructure components:

- Compute (Nova): Provisions and manages the lifecycle of virtual machines (VMs) or bare metal servers (Ironic). These are the foundational compute blocks.

- Networking (Neutron): Defines and manages the virtual networks, routers, subnets, and security groups that connect your resources.

- Storage (Cinder/Swift): Provides persistent block storage (Cinder) for VMs and scalable object storage (Swift) for unstructured data.

OpenStack excels at abstracting hardware, giving you a robust, API-driven foundation to build upon.

Now, imagine you need to build a complex, modular city on that provisioned land. You wouldn't place every prefabricated unit by hand. You'd deploy an automated logistics manager to handle the placement, scaling, healing, and lifecycle of thousands of units.

That expert is Kubernetes.

Kubernetes is the premier Container as a Service (CaaS) orchestrator. It completely automates the deployment, scaling, and operational management of containerized applications. It ensures your services are resilient, self-healing, and can scale dynamically based on demand, all driven by declarative configuration.

Unifying Infrastructure and Applications

Individually, OpenStack and Kubernetes are powerful but solve different problems. OpenStack manages the underlying infrastructure, while Kubernetes manages the applications running on it. When you combine OpenStack and Kubernetes, you achieve a seamless, end-to-end, software-defined data center.

This partnership is a game-changer for platform engineering. It eliminates resource silos by enabling you to run both legacy monoliths on VMs and new microservices in containers on a single, unified platform. The operational consistency is a massive strategic advantage.

The real magic happens when you treat OpenStack as the resilient IaaS layer that provides API-addressable resources, and Kubernetes as the agile CaaS layer that consumes those resources to run applications with declarative efficiency.

To make this distinction crystal clear, here’s a breakdown of their technical roles.

OpenStack vs Kubernetes Core Roles

| Aspect | OpenStack: The Infrastructure Provisioner | Kubernetes: The Application Orchestrator |
|---|---|---|
| Primary Goal | Provides and manages virtualized or physical infrastructure resources (compute, storage, network) via an API. | Deploys, scales, and manages containerized applications on top of infrastructure using a declarative model. |
| Core Unit | Virtual Machines (VMs) or Bare Metal Servers (Ironic Nodes) | Containers (packaged in Pods) |
| Analogy | A real estate developer that prepares plots of land with utilities via an automated API. | A city planner that uses declarative blueprints (YAML manifests) to manage buildings and their lifecycle. |
| Manages | Hardware abstraction, resource pools, multi-tenancy at the IaaS level (projects, users, quotas). | Application lifecycle, service discovery, load balancing, self-healing, configuration, and secrets. |
| Typical User | Infrastructure engineers, cloud administrators, SREs. | Application developers, DevOps engineers, SREs. |

In short, OpenStack provides Kubernetes with a robust and elastic infrastructure foundation, and Kubernetes makes that foundation incredibly productive for running modern applications.

A Proven Strategy for Modern Clouds

Pairing these two isn't a niche concept; it's a proven strategy adopted by major enterprises. The OpenStack User Survey, published by the OpenInfra Foundation (formerly the OpenStack Foundation), has consistently shown that a significant majority of OpenStack deployments also run Kubernetes. This is more than a passing trend: it has become a standard approach to building private and hybrid clouds.

You can dig into the growth of Kubernetes within OpenStack environments to see the historical context. For CTOs and platform engineers, this means you can leverage OpenStack's robust features for provisioning VMs and even bare metal servers, while Kubernetes handles container orchestration on top.

This gives you a flexible, future-proof foundation ready for any workload.

Choosing Your Integration Architecture

Deciding how to architect the integration of OpenStack and Kubernetes is a critical engineering decision. It dictates performance, operational overhead, and scalability. Your choice of resource management, failure domains, and scaling strategy is determined by the architectural pattern you select.

We'll examine three core patterns, each with distinct technical trade-offs. What works for a high-performance computing environment might be overkill and overly complex for a general-purpose application platform.

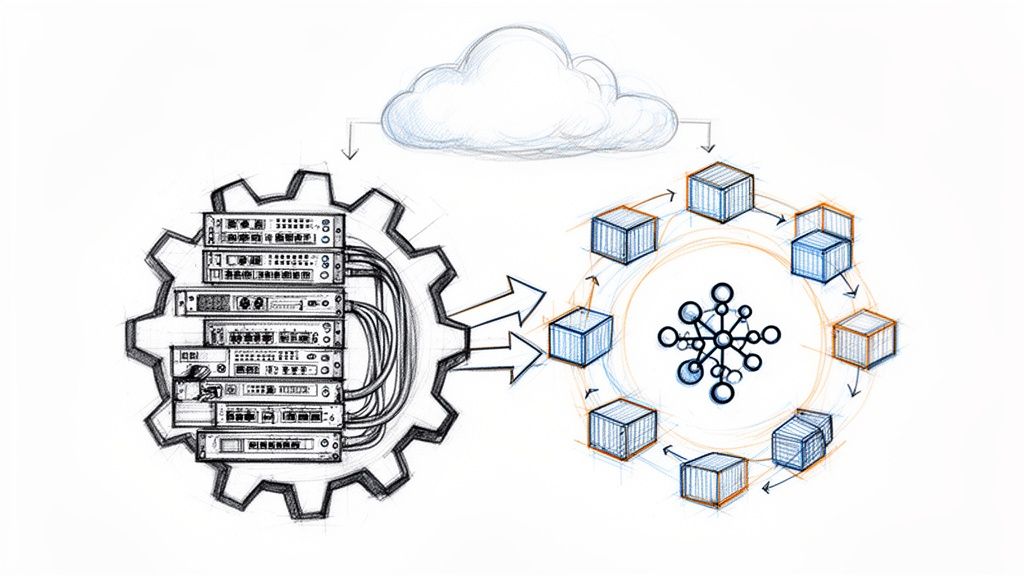

This diagram shows the classic relationship: OpenStack provides the IaaS layer, and Kubernetes runs on top, orchestrating applications.

It's a simple but powerful concept. OpenStack provides fundamental compute, storage, and networking resources, and Kubernetes consumes them to run containerized workloads declaratively.

Pattern 1: Kubernetes on OpenStack VMs

The most common and well-supported pattern is running Kubernetes clusters on virtual machines provisioned by OpenStack Nova. In this model, OpenStack acts as your private IaaS, serving up compute, storage, and networking resources just as a public cloud provider would.

This model is popular because it leverages the core strengths of both platforms with minimal custom engineering and has a mature ecosystem of tools.

- How it works: You use OpenStack APIs or the Horizon dashboard to spin up a set of VMs (e.g., three for the control plane, several for worker nodes). Then, you use a tool like `kubeadm` or a cluster-api provider to deploy a Kubernetes cluster onto those VMs.

- Storage Integration (CSI): The OpenStack Cloud Provider, specifically its Container Storage Interface (CSI) driver, enables Kubernetes to interact directly with OpenStack Cinder. When a user creates a `PersistentVolumeClaim` (PVC), the CSI driver calls the Cinder API to dynamically provision a block storage volume and attaches it to the correct worker node VM.

- Networking Integration (CPI): Similarly, the cloud-provider-openstack component handles network services. When a developer creates a `LoadBalancer` service in Kubernetes, it triggers a call to OpenStack Octavia to provision a load balancer instance, which then directs external traffic to the appropriate service pods.
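To make the storage flow above concrete, here is a minimal sketch in Python that builds the two objects involved: a StorageClass pointing at the Cinder CSI driver and a PVC that requests a volume from it. The provisioner name `cinder.csi.openstack.org` is the one the Cinder CSI plugin registers; the object names, namespace, and size are illustrative assumptions, not values from any particular deployment.

```python
import json

# Provisioner name registered by the Cinder CSI plugin.
CINDER_CSI_PROVISIONER = "cinder.csi.openstack.org"


def cinder_storage_class(name: str) -> dict:
    """Build a StorageClass that delegates provisioning to the Cinder CSI driver."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": CINDER_CSI_PROVISIONER,
        "reclaimPolicy": "Delete",
        # Delay volume creation until a pod is scheduled, so the volume
        # is created where the consuming node can attach it.
        "volumeBindingMode": "WaitForFirstConsumer",
    }


def persistent_volume_claim(name: str, namespace: str, storage_class: str, size: str) -> dict:
    """Build a PVC; creating it is what triggers the CSI driver to call Cinder."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    # Hypothetical names and size, chosen only for illustration.
    items = [
        cinder_storage_class("cinder-standard"),
        persistent_volume_claim("db-data", "demo", "cinder-standard", "20Gi"),
    ]
    # kubectl accepts JSON as well as YAML, so this output can be piped
    # to `kubectl apply -f -`.
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

Emitting JSON keeps the sketch dependency-free; the same structure maps one-to-one onto the YAML manifests most teams keep in Git.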

This approach provides a clean separation of concerns. The infrastructure team manages the OpenStack cloud and its service-level agreements (SLAs), while application and platform teams consume these resources to manage Kubernetes clusters. It's the most pragmatic starting point for most organizations.

Pattern 2: Kubernetes on Bare Metal with Ironic

For workloads demanding maximum performance—such as high-performance computing (HPC), intensive AI/ML training, or high-throughput databases—the virtualization overhead of a hypervisor is an unacceptable performance tax. Running Kubernetes directly on bare metal gives containers raw, unimpeded access to hardware resources.

This is the primary use case for OpenStack Ironic. Ironic is the OpenStack bare metal provisioning service, enabling you to manage physical servers with the same API-driven automation as VMs. You get the raw power of bare metal with the operational efficiency of the cloud. If this fits your needs, our deep dive on Kubernetes on bare metal provides further technical detail.

Choosing your infrastructure model is a critical decision. Understanding the nuances between a private cloud versus an on-premise setup is crucial for aligning your technology strategy with business and financial objectives.

Pattern 3: Containerizing OpenStack on Kubernetes

This advanced pattern inverts the traditional architecture: you run the OpenStack control plane services themselves as containerized applications orchestrated by Kubernetes. Instead of OpenStack managing the infrastructure for Kubernetes, Kubernetes manages the lifecycle of the OpenStack services.

This is the direction many modern OpenStack deployments are heading, championed by projects like OpenStack-Helm and, earlier, the now-retired Kolla-Kubernetes. Core OpenStack services (Nova, Neutron, Keystone, Cinder, etc.) are packaged as containers and managed by Kubernetes controllers: Deployments for stateless API services and StatefulSets for stateful components such as the database and message queue. The benefits are significant: automated deployments, seamless rolling updates, and a self-healing control plane.

This model became viable as Kubernetes matured. Features like RBAC (beta in v1.6, March 2017), Custom Resource Definitions (CRDs) (introduced in v1.7, June 2017), and the GA of the Container Storage Interface (CSI) in v1.13 (December 2018) provided the necessary building blocks for this robust, enterprise-ready architecture. For any DevOps engineer, a Kubernetes-native, self-healing OpenStack control plane is a massive leap forward from legacy high-availability configurations.

A Technical Guide to Deployment and Integration

Architectural diagrams are one thing; implementing a production-ready system is another. This is where we move from theory to practice, focusing on the technical specifics of building a robust and operable platform.

Our goal is a production-grade environment. The deployment choices made here will directly impact day-to-day operations, performance, and scalability.

Let's dive into the technical details of deployment methods and the critical integration points that make running Kubernetes on OpenStack a powerful combination. This is your field manual for turning IaaS into a dynamic application platform.

Choosing Your Deployment Tool

Your first major decision is how to provision Kubernetes clusters on OpenStack. This is a classic engineering trade-off: managed automation versus granular control.

OpenStack Magnum is the "cluster-as-a-service" API for OpenStack. It's a certified project that automates the entire lifecycle of Kubernetes clusters.

With Magnum, you define a cluster template (a declarative spec for your cluster), specifying the Kubernetes version, node count, VM flavor, and other parameters. Magnum's conductors then orchestrate the creation of all necessary OpenStack resources (VMs via Nova, networks via Neutron, security groups, etc.) and install Kubernetes using tools like `kubeadm` under the hood.

Alternatively, a manual deployment using tools like kubeadm or Cluster API Provider for OpenStack (CAPO) offers maximum control. This path is for teams that require deep customization or want to manage the bootstrap process directly. You provision the VMs using Nova, then execute kubeadm init on a control plane node and kubeadm join on worker nodes.
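As a rough illustration of the manual path, the sketch below assembles the two `kubeadm` invocations as argument lists that a provisioning script could run over SSH on the Nova VMs. The flags shown (`--control-plane-endpoint`, `--pod-network-cidr`, `--upload-certs`, `--token`, `--discovery-token-ca-cert-hash`) are standard kubeadm options; the endpoint, CIDR, token, and hash values are placeholders you would substitute with your own.

```python
import shlex
from typing import List


def kubeadm_init_command(control_plane_endpoint: str, pod_network_cidr: str) -> List[str]:
    """Command to bootstrap the first control plane node."""
    return [
        "kubeadm", "init",
        "--control-plane-endpoint", control_plane_endpoint,
        "--pod-network-cidr", pod_network_cidr,
        # Upload control plane certificates so additional
        # control plane nodes can join later.
        "--upload-certs",
    ]


def kubeadm_join_command(api_endpoint: str, token: str, ca_cert_hash: str) -> List[str]:
    """Command a worker node runs to join the cluster."""
    return [
        "kubeadm", "join", api_endpoint,
        "--token", token,
        "--discovery-token-ca-cert-hash", ca_cert_hash,
    ]


if __name__ == "__main__":
    # Placeholder values: replace with the endpoint of your control plane
    # and the token/hash printed by `kubeadm init`.
    init_cmd = kubeadm_init_command("k8s-api.example.internal:6443", "10.244.0.0/16")
    join_cmd = kubeadm_join_command("k8s-api.example.internal:6443", "<token>", "sha256:<hash>")
    print(shlex.join(init_cmd))
    print(shlex.join(join_cmd))
```

Building argument lists rather than shell strings avoids quoting mistakes when the commands are later passed to `subprocess.run` or a remote execution tool.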

Core Integration With the OpenStack Cloud Provider

Regardless of the deployment method, the OpenStack Cloud Provider is the most critical integration component. It's the bridge that allows the Kubernetes control plane to communicate with and control OpenStack resources. This makes the cluster "cloud-aware," enabling it to leverage OpenStack as its native infrastructure provider.

The Cloud Provider for OpenStack unlocks key dynamic features:

- Dynamic Load Balancers: A developer defines a Kubernetes `Service` of type `LoadBalancer` in a YAML manifest. The cloud provider's controller detects this object and makes an API call to OpenStack Octavia to provision a load balancer. Octavia then configures the load balancer to distribute traffic to the service's endpoint IPs.

- Dynamic Persistent Storage: An application requires stateful storage, so a developer creates a `PersistentVolumeClaim` (PVC). The OpenStack CSI driver (part of the cloud provider) detects the PVC and calls the OpenStack Cinder API to create a block storage volume. The driver then orchestrates the attachment of that volume to the correct node VM and makes it available to the pod.
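The load balancer flow described above starts from a very small object. The following sketch builds a `Service` of type `LoadBalancer` as a Python dictionary; the service name, namespace, labels, and ports are hypothetical values chosen for illustration. On a cluster running the OpenStack cloud provider, applying an object like this is what would prompt the controller to request a load balancer from Octavia.

```python
import json
from typing import Dict


def load_balancer_service(name: str, namespace: str, selector: Dict[str, str],
                          port: int, target_port: int) -> dict:
    """Build a Service of type LoadBalancer for pods matching `selector`."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            # This type is the signal the cloud provider controller acts on.
            "type": "LoadBalancer",
            "selector": selector,
            "ports": [
                {"name": "http", "protocol": "TCP", "port": port, "targetPort": target_port}
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical application labels and ports.
    service = load_balancer_service("web", "demo", {"app": "web"}, 80, 8080)
    print(json.dumps(service, indent=2))
```

Nothing in the manifest mentions Octavia or OpenStack, which is the point: the developer-facing API stays portable while the cloud provider handles the infrastructure call.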

This integration abstracts the underlying infrastructure, allowing developers to use standard, declarative Kubernetes APIs to provision resources on demand.

Advanced Networking With Kuryr

Most deployments use a standard Kubernetes CNI plugin like Calico or Flannel, typically configured to create a virtual overlay network for pod-to-pod communication. This is simple and effective but introduces an encapsulation layer (e.g., VXLAN or IP-in-IP) that adds some performance overhead.

For performance-critical applications, Kuryr provides an alternative. Kuryr is a CNI plugin that directly integrates Kubernetes networking with OpenStack Neutron, eliminating the overlay.

Instead of a separate pod network, Kuryr gives each Kubernetes pod its own port on the underlying Neutron network. This makes pods first-class citizens in the OpenStack network fabric. The primary benefit is near-native network performance and the ability to apply Neutron security groups directly to pods. The trade-off is increased consumption of IP addresses and tighter coupling with the underlying network architecture.
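The IP consumption trade-off is easy to underestimate, so a quick capacity check helps. The sketch below assumes, as described above, that every pod takes one address on the Neutron subnet, and it subtracts a configurable number of reserved addresses (for example the gateway and DHCP ports). The subnet sizes, node counts, and pod densities are made-up inputs.

```python
import ipaddress


def usable_addresses(cidr: str, reserved: int = 0) -> int:
    """Addresses available for pods, excluding network/broadcast and reserved ones."""
    network = ipaddress.ip_network(cidr)
    return max(network.num_addresses - 2 - reserved, 0)


def pods_fit(cidr: str, nodes: int, pods_per_node: int, reserved: int = 0) -> bool:
    """True if one address per pod fits in the subnet at full density."""
    return nodes * pods_per_node <= usable_addresses(cidr, reserved)


if __name__ == "__main__":
    # 10 nodes at 30 pods each need 300 addresses.
    for cidr in ("10.0.0.0/24", "10.0.0.0/22"):
        print(cidr, usable_addresses(cidr, reserved=3), pods_fit(cidr, 10, 30, reserved=3))
```

In this example a /24 leaves 251 usable addresses, which is not enough for 300 pods, while a /22 leaves 1019. Sizing the Neutron subnet for peak pod count, not node count, is the key design consideration.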

To help navigate these choices, this comparison breaks down the technical trade-offs.

Technical Comparison of Deployment Methods

This table breaks down the key technical trade-offs engineers face when deciding how to get Kubernetes running on OpenStack.

| Deployment Method | Best For | Management Complexity | Flexibility & Control | Performance |
|---|---|---|---|---|
| OpenStack Magnum | Teams seeking a turnkey, "as-a-service" experience with simplified lifecycle management. | Low | Moderate (Limited to template options) | Standard |
| Manual kubeadm | Teams needing deep customization, running non-standard configurations, or wanting full control. | High | High (Full control over every component) | Standard |
| Kuryr Integration | Performance-critical workloads where network latency and throughput are paramount. | High | Moderate (Tightly coupled with Neutron) | High |

Ultimately, the right choice depends on your team's expertise, your application's performance requirements, and the level of control you require over the stack.

Mastering Day 2 Operations and Management

Provisioning your OpenStack and Kubernetes platform is just Day 1. The real challenge—and where value is created or lost—is in Day 2 operations: monitoring, maintenance, automation, and evolution of the system.

This is the core domain of Site Reliability Engineering (SRE) and platform teams.

An unmonitored platform is a liability. The first priority for Day 2 is to build a unified observability stack that provides deep visibility into both the OpenStack infrastructure and the Kubernetes workloads running on it. You need to be able to correlate application-level issues with underlying infrastructure performance.

Building Your Unified Observability Stack

A proven and powerful stack for this purpose combines Prometheus for metrics, the EFK stack for logging, and Grafana for visualization.

- Prometheus for Metrics: Prometheus is the de facto standard for time-series metrics in cloud-native environments. You deploy exporters to scrape metrics from OpenStack services (e.g., Nova, Neutron, Cinder exporters) and Kubernetes components (kubelet, API server, cAdvisor). This provides a rich dataset on everything from pod CPU utilization to Nova API latency.

- EFK for Logging: The EFK stack (Elasticsearch, Fluentd, and Kibana) provides robust, centralized logging. Fluentd, deployed as a `DaemonSet` in Kubernetes, acts as a log aggregator, collecting logs from container stdout/stderr and OpenStack service log files. Elasticsearch provides powerful indexing and search capabilities, while Kibana offers a UI for querying and visualizing log data.

- Grafana for Visualization: Grafana is the single pane of glass. It connects to both Prometheus and Elasticsearch as data sources, allowing you to build comprehensive dashboards that correlate metrics (e.g., a spike in API latency) with corresponding logs (e.g., error messages), giving you a holistic view of system health.

For a deeper technical guide, see our article on monitoring Kubernetes with Prometheus. The principles are directly applicable to the full stack.

Automating Deployments with CI/CD Pipelines

With observability in place, the next step is automating application delivery. A robust CI/CD (Continuous Integration/Continuous Deployment) pipeline is essential for developer productivity and platform stability.

The goal is a fully automated, auditable path from code commit to production deployment.

The core principle is simple: humans write code, and machines handle the rest. This minimizes manual error, increases deployment velocity, and allows engineers to focus on building features, not performing manual deployments.

Tools like GitLab CI for CI and ArgoCD for CD (GitOps) are an excellent combination. A typical pipeline for a containerized application would be:

- Code Commit: A developer pushes code to a feature branch in a Git repository.

- CI Pipeline Trigger: A webhook triggers a CI job that builds a new container image and runs automated tests.

- Security Scan: The CI pipeline scans the container image for known vulnerabilities (CVEs) using a tool like Trivy.

- Push to Registry: On success, the validated image is pushed to a container registry and tagged.

- GitOps Deployment: The developer updates a deployment manifest in a separate Git repository to point to the new image tag. ArgoCD, which monitors this repository, detects the change and automatically synchronizes the state of the Kubernetes cluster to match the new manifest, triggering a rolling deployment.

Adopting Essential SRE Practices

To achieve enterprise-grade reliability, you must adopt an SRE mindset, moving from reactive firefighting to a proactive, data-driven approach.

- Define SLOs and SLIs: You cannot manage what you do not measure. Define Service Level Objectives (SLOs) based on specific Service Level Indicators (SLIs). For example, an SLI could be `API server request latency (99th percentile)`, with an SLO of `<500ms`. This provides a concrete, measurable target for reliability.

- Automate Failure Recovery: Leverage the self-healing capabilities of your platform. Kubernetes liveness/readiness probes, pod auto-restarts, and node auto-scaling are fundamental. OpenStack services can be configured for high availability. Codify automated responses to common failure modes to minimize mean time to recovery (MTTR).

- Plan and Test Upgrades: Upgrading OpenStack or Kubernetes is a high-stakes operation. Develop a clear, tested, and automated procedure for performing rolling updates with zero downtime. Always have a well-rehearsed rollback plan.
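To show how the SLI/SLO example from the list above turns into a check, the sketch below computes a 99th-percentile latency from raw samples using the nearest-rank method and compares it with the 500 ms objective. In practice the percentile would usually come from your metrics system rather than from raw samples; the sample data here is invented.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of data at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def meets_slo(latencies_ms: Sequence[float], threshold_ms: float = 500.0, pct: float = 99.0) -> bool:
    """True if the chosen latency percentile is under the SLO threshold."""
    return percentile(latencies_ms, pct) < threshold_ms


if __name__ == "__main__":
    # Invented samples: 98 fast requests and two slow outliers.
    samples = [100.0] * 98 + [700.0, 900.0]
    print(percentile(samples, 99.0), meets_slo(samples))
```

With these samples the p99 is 700 ms, so the objective is missed even though 98% of requests were fast, which is exactly the tail behavior a percentile-based SLI is meant to expose.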

Implementing Security and Multi-Tenancy

When you combine OpenStack and Kubernetes, you create a shared multi-tenant platform. In this context, security and tenant isolation are not optional features; they are the foundational requirements for stability and trust. Failure to enforce strict isolation boundaries means you don't have a platform, you have a security incident waiting to happen.

Even back in 2017, The New Stack's Kubernetes User Experience survey showed that nearly 80% of organizations with wide container usage were already in production. Today, failing to secure these production platforms is a non-starter.

Effective multi-tenancy requires creating strong, logical boundaries at every layer of the stack. A tenant's resource consumption, network traffic, or security vulnerability must not impact any other tenant. This is achieved by layering controls at the OpenStack (IaaS) and Kubernetes (CaaS) levels.

Unifying Identity With Keystone and RBAC

True multi-tenancy begins with a unified identity and access management (IAM) system. You must establish a single source of truth for who can do what. This is achieved by integrating OpenStack Keystone with Kubernetes’ Role-Based Access Control (RBAC).

Keystone serves as the central identity provider for the entire cloud. Users, groups, and projects (tenants) are defined here. By configuring the Kubernetes API server to use Keystone as an OpenID Connect (OIDC) or webhook authenticator, you create a unified authentication mechanism.

In practice, a user authenticates against Keystone to obtain a token. This token is then presented to the Kubernetes API server, which validates it with Keystone. This eliminates credential sprawl and establishes a single point of control for authentication.

Once authenticated, Kubernetes RBAC handles authorization. You define Roles (namespace-scoped permissions) and ClusterRoles (cluster-scoped permissions) to specify granular permissions—e.g., create pods, list secrets. You then use RoleBindings and ClusterRoleBindings to associate these permissions with the users or groups authenticated via Keystone. The result is a seamless, end-to-end IAM framework.
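As an illustration of the authorization half, this sketch builds a namespace-scoped `Role` and a `RoleBinding` that grants it to a group. The group name is a hypothetical placeholder: how Keystone projects, roles, or groups appear as Kubernetes group names depends on how your authenticator is configured, so treat it as an assumption.

```python
import json

RBAC_API_GROUP = "rbac.authorization.k8s.io"


def pod_editor_role(namespace: str, name: str = "pod-editor") -> dict:
    """Role allowing basic pod operations inside one namespace."""
    return {
        "apiVersion": f"{RBAC_API_GROUP}/v1",
        "kind": "Role",
        "metadata": {"name": name, "namespace": namespace},
        "rules": [
            {
                "apiGroups": [""],  # "" is the core API group, where pods live
                "resources": ["pods"],
                "verbs": ["get", "list", "watch", "create"],
            }
        ],
    }


def bind_role_to_group(namespace: str, role_name: str, group: str) -> dict:
    """RoleBinding that grants the Role to every member of `group`."""
    return {
        "apiVersion": f"{RBAC_API_GROUP}/v1",
        "kind": "RoleBinding",
        "metadata": {"name": f"{role_name}-{namespace}", "namespace": namespace},
        "subjects": [{"kind": "Group", "name": group, "apiGroup": RBAC_API_GROUP}],
        "roleRef": {"kind": "Role", "name": role_name, "apiGroup": RBAC_API_GROUP},
    }


if __name__ == "__main__":
    # "tenant-a-developers" is a made-up group name for this example.
    items = [
        pod_editor_role("tenant-a"),
        bind_role_to_group("tenant-a", "pod-editor", "tenant-a-developers"),
    ]
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

Binding to groups rather than individual users keeps the Kubernetes side static while membership changes are handled in the identity provider.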

Layering Network Isolation With Neutron and NetworkPolicies

Next, you must isolate tenant network traffic. This requires a two-layer approach, leveraging the strengths of both OpenStack and Kubernetes.

Infrastructure-Level Isolation with Neutron: OpenStack Neutron provides the first and strongest layer of isolation. By assigning each tenant (OpenStack project) its own dedicated virtual network, you create hard network segregation at the IaaS level. Traffic from Tenant A's network has no route to Tenant B's network by default.

Application-Level Security with Kubernetes NetworkPolicies: Within a single tenant's network, you need finer-grained control. Kubernetes `NetworkPolicies` act as a stateful firewall for pods. You write declarative policies to control ingress and egress traffic at the pod level based on labels. For example, you can enforce a policy that only pods with the label `app=frontend` can communicate with pods labeled `app=backend` on port `3306`.

This layered approach provides defense-in-depth. Neutron enforces coarse-grained isolation between tenants, while NetworkPolicies enforce fine-grained micro-segmentation within a tenant's environment.
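The frontend-to-backend rule mentioned above can be sketched as a single `NetworkPolicy` object. The labels and port come from the example in the text; the policy name and namespace are assumptions added for illustration. Note that the policy only takes effect if the cluster's CNI plugin enforces NetworkPolicies.

```python
import json


def allow_frontend_to_backend(namespace: str, port: int = 3306) -> dict:
    """NetworkPolicy: only app=frontend pods may reach app=backend pods on `port`."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "backend-allow-frontend", "namespace": namespace},
        "spec": {
            # The pods this policy protects.
            "podSelector": {"matchLabels": {"app": "backend"}},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    # The only permitted traffic source...
                    "from": [{"podSelector": {"matchLabels": {"app": "frontend"}}}],
                    # ...and the only permitted destination port.
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(allow_frontend_to_backend("tenant-a"), indent=2))
```

Because selecting a pod with any ingress policy denies all other inbound traffic to it, this one object both opens the frontend path and closes everything else to the backend pods.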

Securing Secrets and Workloads

A secure platform also requires protecting sensitive data and enforcing runtime security for workloads.

Secrets Management: Never store secrets (API keys, passwords, certificates) in plain text in Git or container images. Use a dedicated secrets management tool like HashiCorp Vault or OpenStack Barbican. These tools provide secure storage, dynamic secret generation, access control, and audit logging. They integrate with Kubernetes via mechanisms like the CSI Secrets Store driver, allowing pods to mount secrets securely at runtime.

Pod Security Standards: Kubernetes offers built-in Pod Security Standards (PSS) with three profiles: `Privileged`, `Baseline`, and `Restricted`. Enforce the `Restricted` policy as the default for all tenant namespaces. This is a critical security best practice that prevents pods from running as root, gaining host privileges, or accessing sensitive host paths.

Automated Image Scanning: Your CI/CD pipeline must act as a security gate. Integrate a vulnerability scanner like Trivy or Clair to automatically scan every container image for known vulnerabilities (CVEs) during the build process. Fail the build if critical vulnerabilities are found, preventing insecure images from ever reaching your registry.
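Two of the controls above reduce to very little code. The sketch below builds a tenant namespace carrying the Pod Security Admission labels that enforce the `restricted` profile, and adds a tiny gate function that fails when a scan reports a critical finding. The `pod-security.kubernetes.io/*` labels are the standard ones; the findings format is a simplified assumption, not the real output schema of Trivy or Clair.

```python
from typing import Dict, Iterable, Tuple


def restricted_namespace(name: str) -> dict:
    """Namespace whose pods must satisfy the Restricted Pod Security Standard."""
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": name,
            "labels": {
                # enforce rejects violating pods; audit and warn surface them.
                "pod-security.kubernetes.io/enforce": "restricted",
                "pod-security.kubernetes.io/audit": "restricted",
                "pod-security.kubernetes.io/warn": "restricted",
            },
        },
    }


def image_passes_gate(findings: Iterable[Dict[str, str]],
                      blocking: Tuple[str, ...] = ("CRITICAL",)) -> bool:
    """True if no finding has a blocking severity (simplified, assumed format)."""
    return not any(f.get("severity", "").upper() in blocking for f in findings)


if __name__ == "__main__":
    print(restricted_namespace("tenant-a")["metadata"]["labels"])
    # Hypothetical scan result with one critical finding: the gate should fail.
    findings = [{"id": "CVE-0000-0001", "severity": "HIGH"},
                {"id": "CVE-0000-0002", "severity": "CRITICAL"}]
    print(image_passes_gate(findings))
```

In a CI job, a `False` result from the gate would translate into a non-zero exit code so the image is never pushed.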

For a deeper technical treatment of these topics, consult our guide on essential Kubernetes security best practices.

By systematically implementing these technical controls, you engineer your OpenStack and Kubernetes platform into a secure, isolated, and truly multi-tenant environment fit for production workloads.

Knowing when to call in a DevOps expert can be tricky. You've built this powerful platform combining OpenStack and Kubernetes, and it has massive potential. But let's be real—the complexity is no joke. If you're not careful, that competitive edge can quickly turn into an operational bottleneck that grinds everything to a halt.

So, what are the red flags? One of the biggest signs is when your platform's complexity starts to actively slow down your developers. If your engineers are spending more time fighting infrastructure fires than shipping code, you have a problem. When provisioning a simple resource turns into a multi-day saga of manual tickets and approvals, your platform isn't an accelerator anymore. It's an anchor.

When Your Platform Hits a Scaling Wall

Another signal, and it's a big one, is when reliability and scaling issues become a direct threat to the business. Are you seeing frequent outages? Is performance tanking during peak traffic? Maybe your clusters just won't scale out when you desperately need them to.

These aren't just surface-level bugs. They usually point to deeper architectural flaws that need a specialist's eye. An expert can spot the root cause, whether it's a misconfigured Neutron setup causing network gridlock or a clunky Cinder backend that’s killing your persistent volume performance.

When your team is stretched thin, a DevOps partner brings more than just an extra pair of hands. They've seen this movie before—dozens of times. They bring battle-tested strategies to build a resilient platform that actually supports your long-term goals, not just patch the immediate problem.

Accelerating Success with Specialized Expertise

It’s also time to get help when your team hits a wall with advanced features. Maybe you need to implement complex multi-tenancy with Keystone and RBAC, fully automate your CI/CD pipelines, or build out a unified observability stack that makes sense. Getting these wrong can create more problems than they solve.

And when you do bring in an expert, a solid approach to security for DevOps is non-negotiable. It has to be baked into every part of your OpenStack and Kubernetes stack from day one.

A specialized DevOps consultant can jump in and provide critical help where you need it most:

- Strategic Architecture: They’ll design a platform that’s not just stable today, but is built to handle your specific workloads as you grow.

- Best Practice Implementation: They know the proven patterns for security, monitoring, and automation, helping you sidestep those common, costly mistakes.

- Skill Augmentation: A good partner works with your team, not just for them. They'll transfer knowledge and level up your own engineers so they can confidently run the show long-term.

Working with an expert like OpsMoon transforms your integrated OpenStack and Kubernetes infrastructure from a source of friction into the powerful, reliable foundation you need for real growth.

Frequently Asked Questions

When you start digging into the combination of OpenStack and Kubernetes, a lot of the same questions tend to pop up. Let's tackle some of the most common ones I hear from engineers and team leads who are deep in the weeds with this stuff.

Can I Run Virtual Machines and Containers on the Same Kubernetes Cluster?

Yes. The project KubeVirt is a Kubernetes addon that allows you to declare and manage virtual machines using the same Kubernetes API and kubectl tooling used for containers. KubeVirt runs VMs inside special pods, effectively treating them as another workload type.

This is a powerful strategy for migrating legacy applications that are still dependent on a full VM operating system. It allows you to unify your orchestration under a single control plane—Kubernetes—for both modern containerized workloads and traditional VM-based ones, simplifying operations significantly.

Is OpenStack Still Relevant in a Kubernetes World?

Absolutely, particularly for organizations building private or hybrid clouds. OpenStack provides the robust, multi-tenant IaaS layer that Kubernetes needs to operate effectively outside of a public cloud. It excels at managing heterogeneous hardware and, with Ironic, can provision bare metal servers on demand for Kubernetes clusters that require maximum performance.

For any organization that needs sovereign control over its infrastructure, OpenStack provides the enterprise-grade services that allow Kubernetes to shine. It exposes powerful, API-driven networking (Neutron) and block storage (Cinder) directly to Kubernetes, making it the ideal foundational layer.

What Is the Biggest Challenge of Integrating OpenStack and Kubernetes?

From a technical standpoint, the most common and difficult challenge is networking complexity. Achieving seamless, high-performance, and secure networking between Kubernetes pods and the underlying OpenStack network is where many implementations falter.

This requires deep expertise in both Kubernetes CNI and OpenStack Neutron. While tools like Kuryr are designed to bridge this gap, a misconfiguration in routing, security groups, or IP address management can lead to severe performance bottlenecks or security vulnerabilities. This networking complexity is a primary driver for seeking expert assistance to ensure the architecture is sound from day one.

Managing the friction between OpenStack and Kubernetes isn't a side project; it demands specialized knowledge. OpsMoon connects you with top-tier DevOps experts who have been there and done that. They can help architect, secure, and operate your platform, turning all that complexity into a real competitive advantage. Start your free work planning session with OpsMoon and build a clear roadmap for your platform's success.
