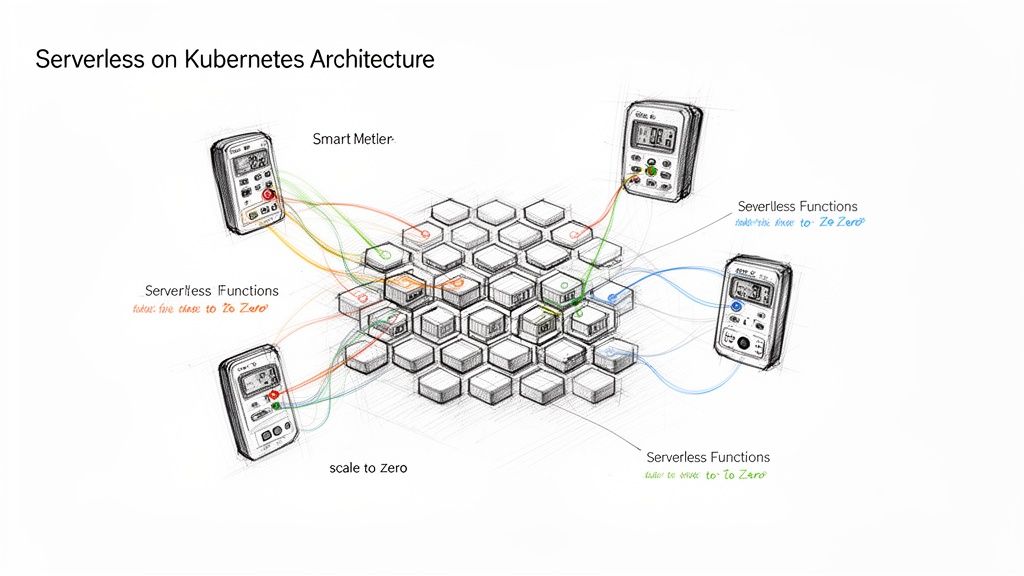

Running serverless on Kubernetes sounds like a contradiction. Serverless architecture abstracts away server management, while Kubernetes is a premier container orchestration platform—which is fundamentally about managing server resources.

However, combining these technologies creates a powerful hybrid. You gain the event-driven, scale-to-zero execution model that developers value, but you run it on your own infrastructure. This eliminates vendor lock-in and grants you complete control over your environment, from networking to security.

Bridging Serverless Agility with Kubernetes Control

Consider Kubernetes as your private, dedicated compute grid. It's robust, reliable, and entirely under your control. The serverless frameworks you deploy on top function as intelligent resource managers for each application.

These frameworks ensure that an application, whether it's a microservice or a function, consumes only the precise compute resources it needs, precisely when it needs them. When an application is idle—receiving no traffic or events—its resource consumption scales down to zero. This is the core principle of running serverless workloads on your Kubernetes clusters.

This approach provides the developer-centric experience of serverless FaaS platforms without tying you to a specific cloud provider's ecosystem. You get the operational benefits of serverless, but with your platform engineering team in full command.

Why Combine Serverless and Kubernetes?

Merging these two cloud-native technologies offers tangible engineering and business advantages.

- Enhanced Developer Velocity: Engineers can focus exclusively on writing and shipping business logic. They deploy a function or container, and the platform handles the underlying scaling, networking, and server provisioning automatically.

- Complete Infrastructure Governance: Your platform and SRE teams retain full control over the cluster's configuration. This allows you to enforce security policies using NetworkPolicy and PodSecurityAdmission, define network routing via Ingress or Gateway API, and standardize your observability stack (e.g., Prometheus, Grafana, Jaeger).

- Multi-Cloud and Hybrid Portability: Your serverless applications are not confined to a single cloud's proprietary FaaS implementation. Since they are packaged as standard OCI containers running on Kubernetes, they can be deployed on any conformant Kubernetes cluster, whether on AWS, GCP, Azure, or on-premises.

- Optimized Resource Utilization: This model enables "scale-to-zero," where idle applications consume zero CPU and memory resources (beyond the minimal overhead of the framework itself). For applications with intermittent or highly variable traffic patterns, the cost savings from reclaimed compute capacity can be substantial.

This architecture yields a portable, efficient, and developer-friendly platform. It allows development teams to move quickly while the organization maintains strict governance over its infrastructure and security posture.

The market reflects this growing interest. The serverless container space—the intersection of Kubernetes and serverless principles—is expanding rapidly. It was valued at USD 4.29 billion in 2026 and is projected to reach USD 11.88 billion by 2030, a 29% CAGR. This growth is driven by the pursuit of cost-efficiency and on-demand, event-driven scaling.

For those considering this architectural shift, understanding the fundamentals is crucial. Our guide on what serverless architecture is provides essential context before we delve into the technical implementation details.

Choosing Your Serverless Kubernetes Framework

Once you commit to running serverless on Kubernetes, the next critical decision is selecting the framework. This choice defines the architectural patterns, developer experience, and operational workload for your team.

While numerous tools exist, the landscape is dominated by three key players: Knative, OpenFaaS, and KEDA. Each offers a different approach to solving the serverless puzzle on Kubernetes.

The right decision depends on your operational capacity, desired developer experience, and the specific use cases you aim to address. This flowchart helps frame the high-level decision between a managed FaaS platform and a self-hosted serverless on Kubernetes solution.

If your goal is deep infrastructure control combined with serverless benefits, a Kubernetes-based framework is the logical choice. Let's dissect the technical specifics of each.

Knative: The Comprehensive Platform

Knative is a powerful, modular platform for building serverless capabilities directly on Kubernetes. Backed by major tech companies, it extends Kubernetes with a set of Custom Resource Definitions (CRDs) to create a complete serverless environment.

Knative is not just a function-runner; it's designed to manage any containerized workload in a serverless fashion. It consists of two primary components:

- Serving: This is the core runtime component. It manages the entire lifecycle of your workloads by handling request-driven autoscaling (including scale-to-zero), creating network endpoints via an ingress gateway (like Kourier or Istio), and managing point-in-time snapshots of your code and configuration as immutable Revisions. This built-in revision management makes advanced deployment strategies like blue/green and canary rollouts declarative and straightforward to implement.

- Eventing: This component provides the infrastructure for building event-driven architectures. It establishes a decoupled system where event producers (e.g., a Kafka Source, a PingSource for cron jobs, or a GitHub webhook) are unaware of event consumers. You can construct complex event flows using Triggers and Brokers to route events to your serverless containers without tight coupling.

Knative's deep integration with Kubernetes makes it feel like a natural extension of the platform. This makes it an ideal choice for platform engineering teams aiming to build a sophisticated internal serverless platform, offering granular control over traffic splitting, revisions, and event routing. The trade-off is higher operational complexity, requiring a strong command of Kubernetes concepts.
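To make the traffic-splitting idea concrete, here is a minimal sketch of a Knative Service manifest built as a Python dict and printed as JSON (which kubectl accepts alongside YAML). The service name, image, and revision name are hypothetical placeholders, and the 90/10 split is only an illustration.

```python
import json


def knative_service(name, image, traffic):
    """Build a Knative Service manifest as a plain dict."""
    return {
        "apiVersion": "serving.knative.dev/v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "template": {"spec": {"containers": [{"image": image}]}},
            "traffic": traffic,
        },
    }


# Hypothetical names: keep 90% of requests on a pinned revision and
# send 10% to whatever revision is newest (a simple canary).
canary = knative_service(
    name="orders",
    image="registry.example.com/orders:v2",
    traffic=[
        {"revisionName": "orders-00001", "percent": 90},
        {"latestRevision": True, "percent": 10},
    ],
)

# The percentages across all traffic targets must add up to 100.
assert sum(t["percent"] for t in canary["spec"]["traffic"]) == 100

print(json.dumps(canary, indent=2))
```

Shifting more traffic to the new revision is then a matter of editing the two percent values and re-applying the manifest.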

OpenFaaS: The User-Friendly Suite

If Knative is a serverless operating system, OpenFaaS is a user-friendly application suite focused on developer productivity. Its primary goal is to simplify the deployment of functions and microservices on Kubernetes, minimizing the learning curve. The core philosophy is "function-first," prioritizing ease of use and a rapid developer workflow.

OpenFaaS provides a clean web UI and a powerful CLI (faas-cli) that abstract away much of the underlying Kubernetes complexity. A developer can create a new function from a template, package it into a container image, and deploy it to the cluster with a few simple commands.

OpenFaaS is exceptionally well-suited for environments where the main objective is to empower developers to ship event-driven services quickly, without requiring them to become Kubernetes experts. Its focus on simplicity and broad language support makes it an excellent entry point for teams adopting the serverless on Kubernetes model.

Architecturally, OpenFaaS uses an API Gateway to route incoming requests to the appropriate functions and a controller, faas-netes, to manage the underlying Kubernetes Deployments and Services. It integrates natively with Prometheus, using metrics like requests-per-second to autoscale function replicas to meet demand.
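As a rough illustration of the function-first model, the sketch below shows what a handler might look like, assuming the classic OpenFaaS Python template in which a handler module exposes handle(req) and receives the request body as a string. The order fields are hypothetical, and other templates use different signatures.

```python
import json


def handle(req):
    """Validate an order payload passed in as the raw request body (a string)."""
    try:
        order = json.loads(req)
    except json.JSONDecodeError:
        return json.dumps({"ok": False, "error": "invalid JSON"})

    if not isinstance(order, dict):
        return json.dumps({"ok": False, "error": "expected a JSON object"})

    # Hypothetical required fields for this example.
    missing = [field for field in ("order_id", "amount") if field not in order]
    if missing:
        return json.dumps({"ok": False, "error": "missing: " + ", ".join(missing)})

    return json.dumps({"ok": True, "order_id": order["order_id"]})
```

The function holds no Kubernetes-specific code at all; packaging it into an image and creating the Deployment and Service is the platform's job.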

KEDA: The Specialized Autoscaler

KEDA, or Kubernetes Event-Driven Autoscaling, takes a different approach. It is not a complete serverless platform. Instead, it is a lightweight, single-purpose component that excels at one thing: event-driven autoscaling.

KEDA acts as an external metrics adapter for Kubernetes. It monitors external event sources, such as message queues (RabbitMQ, SQS), streaming platforms (Kafka, Kinesis), or even databases (PostgreSQL, MySQL). When the number of events in a source (e.g., messages in a queue) exceeds a threshold, KEDA activates the target workload, scaling it from zero to one replica, and feeds the metric to the standard Kubernetes Horizontal Pod Autoscaler (HPA), which scales the workload's pods out further. Once the event source is drained, KEDA scales the workload back down to zero.
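The scaling decision described here can be approximated with a few lines of arithmetic. This is a deliberately simplified sketch, not KEDA's or the HPA's actual code: it ignores tolerances, stabilization windows, and activation thresholds, and the function and parameter names are made up for illustration.

```python
import math


def desired_replicas(queue_length, target_per_replica, min_replicas=0, max_replicas=10):
    """Approximate replica count for a queue-driven workload.

    An empty source scales to min_replicas (zero by default). Any backlog
    needs at least one replica, then roughly one replica per
    `target_per_replica` pending messages, capped at max_replicas.
    """
    if queue_length <= 0:
        return min_replicas
    wanted = math.ceil(queue_length / target_per_replica)
    return max(min_replicas, 1, min(wanted, max_replicas))


print(desired_replicas(0, 5))     # 0: nothing to do, stay at zero
print(desired_replicas(12, 5))    # 3: ceil(12 / 5)
print(desired_replicas(1000, 5))  # 10: capped at max_replicas
```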

KEDA's power lies in its design:

- It Augments Existing Workloads: You can use KEDA to add event-driven, scale-to-zero capabilities to any existing Kubernetes workload, including Deployments, StatefulSets, or Jobs, not just functions.

- It’s Pluggable: KEDA integrates seamlessly with other tools. You can use it alongside a framework like OpenFaaS or even with custom-built controllers to provide more sophisticated, event-driven scaling logic.

- It’s Lightweight: Its focused scope results in a minimal operational footprint compared to a full platform like Knative.
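For a sense of how little configuration this takes, here is a sketch of a KEDA ScaledObject for a Kafka-driven Deployment, written as a Python dict and printed as JSON. The Deployment name, broker address, topic, consumer group, and thresholds are all hypothetical; check the Kafka scaler documentation for the options your KEDA version supports.

```python
import json

# Hypothetical target: a Deployment named "order-processor" in the same namespace.
scaled_object = {
    "apiVersion": "keda.sh/v1alpha1",
    "kind": "ScaledObject",
    "metadata": {"name": "order-processor-scaler"},
    "spec": {
        "scaleTargetRef": {"name": "order-processor"},
        "minReplicaCount": 0,   # allow scale-to-zero
        "maxReplicaCount": 20,
        "cooldownPeriod": 300,  # seconds of inactivity before returning to zero
        "triggers": [
            {
                "type": "kafka",
                # Trigger metadata values are strings.
                "metadata": {
                    "bootstrapServers": "kafka.example.svc:9092",
                    "consumerGroup": "order-processor",
                    "topic": "orders",
                    "lagThreshold": "50",
                },
            }
        ],
    },
}

print(json.dumps(scaled_object, indent=2))
```

The existing Deployment is left untouched; the ScaledObject only references it by name.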

Choosing the right framework depends entirely on your goals.

Technical Comparison of Serverless Kubernetes Frameworks

| Feature | Knative | OpenFaaS | KEDA |
|---|---|---|---|
| Primary Goal | Comprehensive serverless platform for containers | Developer-friendly FaaS platform | Specialized event-driven autoscaler |
| Core Abstraction | Service, Revision, Route, Broker | Function | ScaledObject, Trigger |
| Scale-to-Zero | Yes, based on HTTP traffic inactivity | Yes, based on request inactivity/RPS | Yes, based on metrics from external event sources |
| Eventing | Built-in, broker/trigger model for complex routing | Via API Gateway & asynchronous function invocation | Core feature, with 50+ built-in Scalers |
| Complexity | High; requires deep K8s knowledge | Low; abstracts K8s complexity | Low; lightweight and focused |
| Best For | Building an internal PaaS with advanced features | Rapid developer onboarding and function-centric use cases | Adding event-driven scaling to existing K8s workloads |

For a comprehensive, Kubernetes-native platform with advanced traffic management, Knative is the heavyweight champion. For rapid developer adoption and simplicity, OpenFaaS wins on friendliness. And for adding precise, event-driven scaling to any container, KEDA is the perfect specialized tool for the job.

Now, let's move from theory to practical design, architecting a serverless application on Kubernetes.

Implementing a serverless framework on Kubernetes involves more than a helm install command. It demands a shift in application design, event flow management, and performance tuning. We will trace an event's lifecycle to understand the key architectural patterns.

The foundation is Kubernetes, and its widespread adoption makes it a reliable choice. A recent CNCF survey reported that 96% of organizations are either using or evaluating Kubernetes, solidifying its status as the de facto standard for container orchestration. Platform teams trust it for its maturity and battle-tested reliability.

Tracing an Event From Source to Pod

Consider a common e-commerce scenario: processing a new order submitted to a Kafka topic. In a traditional architecture, a consumer service would run 24/7, polling the topic and consuming resources continuously. In our serverless model, the order-processing function is scaled to zero, consuming no resources until an order arrives.

Here's the sequence of events when a new message hits the Kafka topic:

- Event Detection: The serverless framework's eventing component, such as a Knative KafkaSource or a KEDA ScaledObject configured for a Kafka trigger, is actively monitoring the topic. It detects the new message and initiates the process.

- Controller Activation: The event source notifies the framework's controller (e.g., the Knative Activator or the KEDA operator) that there is work pending for a specific function.

- Scale-Up Decision: The controller checks the state of the target function's Deployment and finds it has zero replicas. It then invokes the Kubernetes API server to patch the Deployment's replica count to 1 (or more, depending on configuration and event backlog).

- Pod Scheduling: The Kubernetes scheduler assigns the new pod to a suitable worker node. The kubelet on that node pulls the container image (if not already cached) and starts the container.

- Event Delivery: Once the pod is running and its readiness probe passes, the framework routes the event (the Kafka message) to it for processing. The function executes its business logic. After processing is complete and a configurable idle period elapses, the controller scales the Deployment back down to zero replicas.
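The lifecycle above can be captured in a toy model. The class below is purely illustrative and talks to no cluster: it tracks a replica count against a logical clock, so you can see which events would pay the startup penalty and when the idle period returns the function to zero. The class name and the 60-second idle period are assumptions.

```python
class ScaleToZeroController:
    """Toy model of one function's replica count, driven by a logical clock."""

    def __init__(self, idle_period_s=60):
        self.idle_period_s = idle_period_s
        self.replicas = 0
        self.last_event_at = None

    def on_event(self, now_s):
        """Record an event; return True if it arrived while scaled to zero."""
        cold = self.replicas == 0
        if cold:
            self.replicas = 1  # stands in for patching the Deployment
        self.last_event_at = now_s
        return cold

    def tick(self, now_s):
        """Scale back to zero once the idle period has elapsed."""
        if self.replicas > 0 and now_s - self.last_event_at >= self.idle_period_s:
            self.replicas = 0
        return self.replicas


controller = ScaleToZeroController(idle_period_s=60)
print(controller.on_event(0))   # True: first order starts a pod
print(controller.on_event(10))  # False: the pod is already warm
print(controller.tick(70))      # 0: idle for 60s, scaled back down
```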

This entire sequence, from event detection to a ready pod, is known as a "cold start." While it is the key to resource efficiency, managing the associated latency is a primary architectural challenge.

Key Architectural Design Patterns

You cannot simply redeploy monolithic applications as functions. A robust serverless system on Kubernetes relies on specific design patterns for scalability and maintainability.

Adopting these patterns early is crucial for managing technical debt and maintaining long-term architectural agility.

- Event-Driven Microservices: This is the foundational pattern. Services communicate asynchronously by publishing and subscribing to events via a message bus (e.g., Kafka, RabbitMQ, NATS) rather than making direct, synchronous API calls. This decouples services, allowing them to scale independently and preventing cascading failures.

- Function Composition (Chaining): Avoid building large, monolithic functions. Decompose complex workflows into a chain of small, single-purpose functions. For instance, an "order processing" workflow can be composed of validate-order, process-payment, and update-inventory functions. Each function is triggered by an event produced by the preceding one, creating a distributed workflow.

- Sidecar for Observability: Keep business logic clean and focused. Instead of embedding code for logging, metrics, and tracing in every function, inject an observability sidecar container into each function's pod. This container can handle log shipping, metric scraping, and trace propagation automatically, separating concerns effectively.
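The chaining pattern can be sketched with an in-memory event bus standing in for Kafka, RabbitMQ, or NATS. The topic names and the bus class are hypothetical; the point is that each function only knows which event it consumes and which it emits, never which function comes next.

```python
from collections import defaultdict, deque


class EventBus:
    """In-memory stand-in for a message broker."""

    def __init__(self):
        self.subscribers = defaultdict(list)
        self.queue = deque()

    def subscribe(self, topic, handler):
        self.subscribers[topic].append(handler)

    def publish(self, topic, payload):
        self.queue.append((topic, payload))

    def run(self):
        """Deliver queued events until no more are produced."""
        while self.queue:
            topic, payload = self.queue.popleft()
            for handler in self.subscribers[topic]:
                handler(self, payload)


inventory_log = []


def validate_order(bus, order):
    if order["amount"] > 0:
        bus.publish("order.validated", order)


def process_payment(bus, order):
    bus.publish("payment.processed", {**order, "paid": True})


def update_inventory(bus, order):
    inventory_log.append(order["order_id"])


bus = EventBus()
bus.subscribe("order.created", validate_order)
bus.subscribe("order.validated", process_payment)
bus.subscribe("payment.processed", update_inventory)

bus.publish("order.created", {"order_id": "A1", "amount": 42})
bus.run()
print(inventory_log)  # ['A1']
```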

A critical architectural constraint for serverless is statelessness. Functions must not store state in local memory or on disk between invocations. Any required state, such as user sessions or transaction data, must be externalized to a durable service such as a database (e.g., PostgreSQL), a cache (e.g., Redis), or an object store (e.g., MinIO, S3).
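One way to keep a handler stateless is to pass the state store in as a dependency. In the sketch below, InMemoryStore is a hypothetical test double; in a cluster it would be replaced by a client for an external service, so any pod (including a freshly started one) sees the same data.

```python
class InMemoryStore:
    """Test double; in a cluster this would wrap a Redis or PostgreSQL client."""

    def __init__(self):
        self._data = {}

    def get(self, key, default=None):
        return self._data.get(key, default)

    def set(self, key, value):
        self._data[key] = value


def handle_add_to_cart(store, session_id, item):
    """Stateless handler: all cart state is read from and written to `store`."""
    cart = list(store.get(session_id, []))
    cart.append(item)
    store.set(session_id, cart)
    return len(cart)


store = InMemoryStore()
print(handle_add_to_cart(store, "session-1", "book"))  # 1
print(handle_add_to_cart(store, "session-1", "pen"))   # 2
```

Because the handler keeps nothing in module-level variables, it does not matter whether two invocations land on the same pod or on different ones.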

Mitigating Cold Start Latency

A multi-second cold start may be acceptable for asynchronous background jobs, but it's unacceptable for user-facing APIs. Fortunately, several technical levers can be pulled to mitigate this latency.

One of the most effective strategies is keeping a minimum number of instances warm, the self-hosted counterpart to what managed FaaS platforms call provisioned concurrency. Frameworks like Knative allow you to set a minScale value greater than zero. For a Knative Service, this is an annotation on the revision template: annotations: { autoscaling.knative.dev/minScale: "1" } (newer Knative documentation spells the key min-scale). This instructs the autoscaler to maintain a minimum number of warm, ready-to-serve pods at all times, effectively eliminating cold starts for those instances at the cost of idle resource consumption.
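As a sketch of where that annotation lives, the helper below adds it to the revision template of a Knative Service manifest held as a Python dict. The service name and image are hypothetical, and the annotation key is used exactly as written above; check the documentation for your Knative version before relying on it.

```python
import json


def with_min_scale(service, min_scale):
    """Return a copy of a Knative Service manifest with a minimum scale set."""
    updated = json.loads(json.dumps(service))  # cheap deep copy for JSON-like data
    annotations = (
        updated["spec"]["template"]
        .setdefault("metadata", {})
        .setdefault("annotations", {})
    )
    # Key as written in the text; annotation values are always strings.
    annotations["autoscaling.knative.dev/minScale"] = str(min_scale)
    return updated


service = {
    "apiVersion": "serving.knative.dev/v1",
    "kind": "Service",
    "metadata": {"name": "orders"},
    "spec": {
        "template": {
            "spec": {"containers": [{"image": "registry.example.com/orders:v1"}]}
        }
    },
}

warm = with_min_scale(service, 1)
print(json.dumps(warm["spec"]["template"]["metadata"], indent=2))
```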

For managing traffic ingress to these functions, the Kubernetes Gateway API offers a more expressive and role-oriented alternative to the traditional Ingress API.

Another significant factor is your container image size. Smaller images lead to faster pull times and quicker startups. Always use multi-stage Dockerfiles to produce minimal final images. Start with a lean base image like distroless or Alpine Linux, and ensure your application runtime is optimized for fast startup. These practical optimizations are essential for meeting performance SLAs in a serverless on Kubernetes environment.

Mastering Operations for Your Serverless Platform

When you run serverless on Kubernetes, you assume full operational responsibility. Unlike a managed FaaS offering where the cloud provider handles underlying operations, your platform team is now accountable for the Day 2 operations that ensure reliability, performance, and security.

This is a double-edged sword: you gain complete control but also inherit the operational burden. Excelling in these domains is what distinguishes a fragile proof-of-concept from a production-grade platform developers trust.

Fine-Tuning Scaling and Performance

Default autoscaling configurations are a starting point, but production workloads require fine-tuning. The primary performance challenge in any serverless environment is the cold start. To mitigate it, you must move beyond defaults and implement specific strategies.

Establish a warm container pool by configuring a minimum replica count. Frameworks like Knative allow you to set a minScale annotation (e.g., autoscaling.knative.dev/minScale: "1") to ensure at least one pod is always running, ready to serve requests instantly. This eliminates cold starts for initial traffic but incurs the cost of idle resources.

Further tune performance by adjusting concurrency settings. In Knative, the containerConcurrency parameter defines how many concurrent requests a single pod can handle before the autoscaler adds another replica. Setting this value based on empirical load testing allows you to optimize resource utilization and keep pods "hot" for longer, reducing the frequency of scale-to-zero events. For a deeper dive, learn more about autoscaling in Kubernetes in our article.
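The effect of the concurrency setting is easiest to see with numbers. The function below is a simplified approximation of concurrency-based autoscaling, not Knative's actual algorithm (which averages over time windows and applies a target utilization); the load figure and limits are hypothetical.

```python
import math


def desired_pods(in_flight_requests, target_per_pod, min_scale=0, max_scale=100):
    """Rough pod count needed to keep each pod at or below its concurrency target."""
    if in_flight_requests <= 0:
        return min_scale
    wanted = math.ceil(in_flight_requests / target_per_pod)
    return max(min_scale, min(wanted, max_scale))


# Same load (200 concurrent requests), three different per-pod targets.
for target in (1, 10, 50):
    print(target, desired_pods(200, target))
# 1 100   (capped at max_scale)
# 10 20
# 50 4
```

A low target gives each request a pod of its own but multiplies the pod count; a high target packs requests densely and only makes sense if load testing shows a single pod can sustain it.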

Hardening Your Security Posture

Operating a multi-tenant serverless platform on a shared Kubernetes cluster introduces unique security challenges. You must secure both the platform components and the arbitrary code developers deploy. Kubernetes-native security primitives are your primary tools.

Implement workload isolation using NetworkPolicies. These act as pod-level firewalls, defining ingress and egress rules based on labels, namespaces, or IP blocks. This prevents lateral movement by an attacker if a single function is compromised.

Enforce the principle of least privilege with Role-Based Access Control (RBAC). Create granular Roles and ClusterRoles that grant only the minimum permissions required by the serverless framework's components and the deployed functions. Combine this with Pod Security Admission (PSA), using policies like baseline or restricted to prevent pods from running with elevated privileges.
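A common starting point combines both primitives: a namespace labeled for the restricted Pod Security level and a default-deny NetworkPolicy. The sketch below builds both as Python dicts and prints them as a single JSON list; the namespace name is hypothetical, and a real platform would add explicit allow rules (for the ingress gateway, DNS, and the framework's own components) on top of the deny-all baseline.

```python
import json

NAMESPACE = "functions"  # hypothetical namespace for tenant workloads

namespace = {
    "apiVersion": "v1",
    "kind": "Namespace",
    "metadata": {
        "name": NAMESPACE,
        # Pod Security Admission is configured through namespace labels.
        "labels": {"pod-security.kubernetes.io/enforce": "restricted"},
    },
}

default_deny = {
    "apiVersion": "networking.k8s.io/v1",
    "kind": "NetworkPolicy",
    "metadata": {"name": "default-deny", "namespace": NAMESPACE},
    "spec": {
        "podSelector": {},  # empty selector matches every pod in the namespace
        "policyTypes": ["Ingress", "Egress"],  # no rules listed, so all traffic is denied
    },
}

manifests = {"apiVersion": "v1", "kind": "List", "items": [namespace, default_deny]}
print(json.dumps(manifests, indent=2))
```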

Do not neglect application-level security. The function code itself is a primary attack vector. Integrate static application security testing (SAST) and software composition analysis (SCA) tools directly into your CI/CD pipeline to scan for vulnerabilities in your code and its dependencies before deployment.

Achieving Full-Stack Observability

In a dynamic environment of ephemeral, event-driven functions, traditional monitoring tools are insufficient. A comprehensive observability solution requires correlating signals across three pillars: metrics, logs, and traces.

- Metrics with Prometheus: Instrument your serverless framework and functions to expose metrics in the Prometheus format. Track key indicators such as invocation counts, execution duration, error rates, and cold start latency. Use these metrics to build dashboards in Grafana and configure alerts for anomalous behavior.

- Distributed Tracing with Jaeger: When a single user request triggers a complex chain of functions, distributed tracing is indispensable. Instrument your code with an OpenTelemetry SDK to propagate trace context across function invocations. Tools like Jaeger can then visualize the end-to-end request flow, pinpointing bottlenecks and error sources within the distributed system.

- Logging with Fluentd: Aggregate logs from all function pods into a centralized logging backend like Elasticsearch. A log-forwarding agent like Fluentd or Fluent Bit, deployed as a DaemonSet, is critical for collecting logs from ephemeral pods before they are terminated.

This observability trifecta enables powerful debugging workflows. A spike in an error metric can be correlated with specific distributed traces, which in turn lead directly to the relevant logs needed to diagnose the root cause.
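Correlation across functions depends on every hop carrying the same trace ID. In practice an OpenTelemetry SDK handles this for you; the standard-library sketch below only illustrates the idea using the W3C Trace Context traceparent header format (version, trace ID, span ID, flags). The function names are made up for this example.

```python
import secrets


def new_traceparent():
    """Start a new trace: 32 hex chars of trace ID, 16 of span ID, sampled flag."""
    trace_id = secrets.token_hex(16)
    span_id = secrets.token_hex(8)
    return f"00-{trace_id}-{span_id}-01"


def child_traceparent(parent):
    """Keep the caller's trace ID but mint a fresh span ID for this hop."""
    version, trace_id, _parent_span_id, flags = parent.split("-")
    return f"{version}-{trace_id}-{secrets.token_hex(8)}-{flags}"


incoming = new_traceparent()            # e.g. set by the first function
outgoing = child_traceparent(incoming)  # attached to the event it publishes

# Both hops share one trace ID, which is what lets a tracing backend stitch them together.
print(incoming.split("-")[1] == outgoing.split("-")[1])  # True
```

Writing the same trace ID into each log line is what makes the metric-to-trace-to-log workflow possible.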

Automating Deployments with CI/CD

Manual deployment of serverless functions is error-prone and unscalable. A robust CI/CD pipeline is non-negotiable for achieving velocity and reliability. Tools like GitLab CI or the Kubernetes-native Tekton can automate the entire lifecycle.

A typical serverless CI/CD pipeline includes these stages:

- Commit: A developer pushes code changes to a Git repository, triggering the pipeline.

- Build: The pipeline builds the function code, runs unit tests, and packages it into a versioned OCI container image.

- Test: The new image is subjected to automated integration tests and security scans (SAST/SCA).

- Deploy: Upon successful validation, the pipeline automatically applies the updated serverless resource manifest (e.g., a Knative Service YAML) to the Kubernetes cluster, triggering a safe rollout (e.g., canary).

This automation ensures every deployment is consistent, rigorously tested, and secure. It provides developers a streamlined path to production while allowing the platform team to enforce governance and quality gates.

Your Implementation Roadmap

Adopting serverless on Kubernetes is a strategic initiative, not a weekend project. It requires a phased approach that builds on your team's existing capabilities and delivers incremental value.

This four-phase roadmap provides a structured path from initial assessment to a fully governed, enterprise-wide serverless platform.

Phase 1: Assess and Plan

Before writing any YAML, conduct a thorough assessment of your team's Kubernetes maturity. Are they proficient with kubectl and basic resources, or do they have deep experience with operators and CRDs? The answer will heavily influence your choice of framework.

Next, identify a suitable low-risk pilot project. The ideal candidate is an asynchronous, non-critical workload. Examples include:

- An image resizing function triggered by an S3/MinIO put event.

- A data enrichment job that processes messages from a RabbitMQ queue.

- A webhook handler for processing notifications from a third-party service like Stripe or GitHub.

These projects provide a safe environment for learning and experimentation. Based on the pilot's requirements and your team's skills, select your initial framework. For teams with strong Kubernetes expertise seeking advanced traffic management, Knative is a strong contender. For teams prioritizing developer velocity and simplicity, OpenFaaS may be a better starting point.

Phase 2: Build the Pilot

With a plan in place, begin implementation. Isolate your experiment by creating a dedicated Kubernetes namespace for the pilot. This prevents interference with existing applications and simplifies resource tracking and cleanup.

Deploy your chosen serverless framework into this namespace, following the official installation documentation precisely. Pay close attention to the RBAC permissions and CRDs being installed. Once the framework is operational, refactor and deploy your pilot application onto the platform.

The goal of this phase is to achieve a working end-to-end flow. Verify that the function can be triggered by an event and, crucially, that it scales down to zero when idle. This functional validation is the key success metric for this phase.

Phase 3: Instrument and Optimize

With the pilot running, the next step is to make its behavior visible. You cannot optimize what you cannot measure. Integrate your observability stack—Prometheus for metrics, Fluentd for logs, and Jaeger for traces—with the pilot application and the serverless framework itself.

This is the phase where you establish performance baselines. Collect data on critical metrics: P95 and P99 cold start latency, request duration, and resource consumption (CPU/memory) per invocation.
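If your metrics backend does not already report percentiles, they are simple to compute from raw samples. The sketch below uses the nearest-rank method; the sample latencies are invented for illustration, and other percentile definitions (for example, with interpolation) will give slightly different values on small samples.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical cold start measurements in milliseconds.
cold_starts_ms = [820, 910, 760, 1540, 880, 930, 2400, 790, 860, 1010]

print(percentile(cold_starts_ms, 50))  # 880
print(percentile(cold_starts_ms, 95))  # 2400
```

With only ten samples, P95 and P99 both collapse onto the slowest observation, which is a reminder to collect enough cold starts before treating tail figures as a baseline.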

Armed with this data, begin optimization. Experiment with different container base images (distroless vs. Alpine vs. slim) to measure the impact on cold start times. Tune concurrency settings to find the optimal balance between resource utilization and responsiveness. Test different minScale configurations (e.g., 0 vs. 1 vs. 2) to quantify the trade-off between reduced latency and increased idle cost. This is the process of turning raw data into actionable performance and cost improvements.

Phase 4: Scale and Govern

After optimizing the pilot, prepare for broader adoption. Codify your learnings into internal best practice documents and create a set of standardized function templates in a shared Git repository. These assets will dramatically lower the barrier to entry for other teams.

At this stage, managed services can accelerate your progress. The managed Kubernetes market is projected to reach USD 1,674.5 million by 2025 as organizations seek to offload operational burdens. A partner like OpsMoon can provide flexible engineering expertise and strategic guidance, reducing migration costs and bridging skill gaps. This support is vital; one study found that 21% of developers using Kubernetes were unsure of its benefits—a gap that expert guidance can close. You can find more details about the managed Kubernetes market trends.

Finally, develop a clear rollout strategy. Establish governance policies, define support channels, and create a formal process for onboarding new teams. Showcase the success metrics from your pilot—cost savings, improved deployment frequency, reduced latency—to build excitement and secure buy-in from the wider organization. A successful pilot, backed by hard data and clear documentation, is your most effective tool for scaling serverless on Kubernetes across the enterprise.

Frequently Asked Questions

Adopting serverless on Kubernetes is a powerful but complex proposition. It merges two sophisticated ecosystems, naturally raising many questions. Here are direct, technical answers to the most common queries from engineers and technology leaders.

Is Serverless On Kubernetes Just A More Complicated PaaS?

Not exactly, although the comparison is understandable. Both a PaaS (Platform as a Service) and a serverless platform abstract away underlying infrastructure. However, they are designed for different workload types. A traditional PaaS (like Heroku or Cloud Foundry) is typically optimized for long-running, always-on applications and services.

Serverless on Kubernetes, by contrast, is specifically engineered for ephemeral, event-driven workloads. Its defining characteristic is the ability to scale to zero, a feature not native to most PaaS architectures. You are essentially implementing a FaaS (Function as a Service) or CaaS (Container as a Service) model on your own Kubernetes cluster.

You gain the granular, pay-per-use cost model of serverless while retaining the control, portability, and open ecosystem of Kubernetes. A generic PaaS often imposes a more rigid, opinionated structure; serverless on Kubernetes leaves those architectural choices to you.

How Do You Manage Cold Starts In A Kubernetes Serverless Environment?

Managing cold start latency is arguably the most critical operational task in a self-hosted serverless environment. A cold start occurs when a request or event arrives for a function that has been scaled to zero replicas. The system must then execute a sequence of steps—API call to the controller, pod scheduling, image pull, and container initialization—before processing the request.

Fortunately, several well-established techniques can mitigate this latency:

- Provisioned Concurrency: Frameworks like Knative support a minScale annotation. Setting this to 1 or higher configures the autoscaler to always maintain a minimum number of warm pods. This effectively eliminates cold starts for those instances at the cost of consuming idle resources.

- Container Image Optimization: Image size directly impacts startup time. Employ multi-stage Dockerfiles to create minimal production images. Use small base images like gcr.io/distroless/static-debian11 or alpine. Ensure your container registry is located geographically close to your cluster to minimize network latency during image pulls.

- Efficient Runtimes and AOT Compilation: Language and runtime choice have a significant impact. Compiled languages like Go and Rust offer extremely fast startup times. For JVM-based applications, leverage Ahead-Of-Time (AOT) compilation with frameworks like Quarkus or Spring Native (which uses GraalVM) to dramatically reduce startup times from seconds to milliseconds.

- Concurrency Tuning: Configure the number of concurrent requests a single pod can handle (e.g., Knative's target or containerConcurrency settings). Tuning this based on application performance can keep pods active and "hot" for longer periods, reducing the frequency of scaling down to zero.

What Are The Biggest Technical Hurdles In Adoption?

The most significant hurdles are the steep learning curve and the operational overhead. Unlike a managed FaaS offering, running serverless on Kubernetes means you own and operate the entire stack.

Teams commonly encounter these challenges:

- Deep Kubernetes Expertise: A thorough understanding of Kubernetes networking (CNI), storage (CSI), security (RBAC and Pod Security Admission, which replaced the removed PodSecurityPolicy API), and the control plane is non-negotiable. You cannot effectively operate a platform built on an infrastructure you don't fully comprehend.

- Framework Mastery: Each serverless framework (Knative, OpenFaaS, etc.) introduces its own set of CRDs, controllers, and operational patterns. Your team must learn to install, configure, upgrade, and debug these components, which adds another layer of complexity.

- Observability Integration: Correlating signals from thousands of ephemeral, event-driven functions is a significant engineering challenge. Implementing and maintaining a robust observability stack (metrics, tracing, logging) that provides a coherent view of the system's behavior requires specialized expertise.

- Developer Experience and Tooling: You become responsible for the entire developer workflow. This includes providing effective local development and debugging tools (e.g., skaffold, Telepresence), creating standardized CI/CD pipelines, and writing clear documentation and function templates.

How Does This Model Impact Total Cost of Ownership?

The Total Cost of Ownership (TCO) for serverless on Kubernetes can be significantly lower than public cloud FaaS, but this is contingent on achieving a certain scale and understanding that you are shifting costs, not eliminating them. You trade a provider's per-invocation and per-GB-second fees for the direct costs of your cluster's compute, storage, networking, and the engineering talent required to manage it.

Initially, your costs may increase due to the overhead of the Kubernetes control plane and any provisioned concurrency (warm pods). However, as your workload scales, the economics shift. The ability to achieve high-density workload packing on a fixed-cost cluster creates economies of scale that are unattainable with public FaaS pricing models.

Ultimately, your TCO is a function of workload density, operational automation, and the engineering cost to build and maintain the platform. You are exchanging a high variable cost (pay-per-use) for a higher fixed operational cost.
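A back-of-the-envelope break-even calculation makes the trade-off tangible. Every figure below is a hypothetical placeholder rather than real pricing: a blended FaaS cost of 4 USD per million invocations and a fixed 6,000 USD per month for the cluster plus the engineering time to run it. Substitute your own numbers.

```python
def faas_monthly_cost(invocations_millions, usd_per_million):
    """Variable cost: scales linearly with usage."""
    return invocations_millions * usd_per_million


def break_even_millions(fixed_monthly_usd, usd_per_million):
    """Monthly invocation volume (in millions) where both models cost the same."""
    return fixed_monthly_usd / usd_per_million


def cheaper_option(invocations_millions, fixed_monthly_usd, usd_per_million):
    faas = faas_monthly_cost(invocations_millions, usd_per_million)
    return "faas" if faas < fixed_monthly_usd else "kubernetes"


# Hypothetical inputs, not real prices.
FIXED_USD = 6000
USD_PER_MILLION = 4

print(break_even_millions(FIXED_USD, USD_PER_MILLION))   # 1500.0 (million invocations)
print(cheaper_option(100, FIXED_USD, USD_PER_MILLION))   # faas
print(cheaper_option(2000, FIXED_USD, USD_PER_MILLION))  # kubernetes
```

The model treats the cluster cost as flat, which only holds while the workload fits on the existing nodes; past that point the fixed cost steps up and the break-even shifts.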

Ready to implement a robust serverless on Kubernetes strategy but need the right expertise? OpsMoon connects you with the top 0.7% of remote DevOps engineers to accelerate your projects. Start with a free work planning session to map your roadmap and find the perfect talent for your infrastructure needs. Visit us at https://opsmoon.com to get started.
