Any DevOps tools comparison begins with a critical architectural decision. Do you commit to a unified platform like GitLab for its integrated developer experience and single data model, or do you assemble a best-of-breed stack using specialized tools like Jenkins, Terraform, and Snyk for maximum flexibility and performance? There is no universally correct answer. The optimal path depends on your team's existing skill set, operational capacity, and specific project requirements. The right choice is the difference between high-velocity engineering and a high-friction, low-output delivery lifecycle.

Navigating The 2026 DevOps Tooling Landscape

Selecting the right tooling is a strategic decision that directly impacts innovation velocity and competitive standing. The modern DevOps ecosystem is a complex, fragmented landscape where engineering leaders often struggle with tool sprawl, integration debt, and the risk of vendor lock-in. Before evaluating specific features, you must establish clear, first-principle objectives for your technology stack. What operational problems are you trying to solve? Are you optimizing for developer velocity, infrastructure cost, or security posture?

The market's explosive growth underscores the mission-critical nature of this domain. Valued at USD 12.66 billion in 2024, the global DevOps market is projected to reach USD 86.16 billion by 2034—a 580% increase. This signals a fundamental industry shift towards automated, integrated software delivery lifecycles. For deeper quantitative analysis, review the full DevOps market research from Polaris Market Research.
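As a quick sanity check on these figures, the total increase and the implied compound annual growth rate can be derived from the two quoted values. The growth rate below is a derived number based on a 10-year horizon (2024 to 2034), not a figure quoted from the report itself.

```python
# Figures quoted in the text above (USD billions).
start_value = 12.66   # 2024 valuation
end_value = 86.16     # 2034 projection
years = 2034 - 2024

# Total percentage increase over the period.
pct_increase = (end_value - start_value) / start_value * 100

# Implied compound annual growth rate (derived, not quoted by the source).
cagr = (end_value / start_value) ** (1 / years) - 1

print(f"Total increase: {pct_increase:.1f}%")
print(f"Implied CAGR:   {cagr * 100:.1f}%")
```

The result confirms the roughly 580% increase and implies growth of about 21% per year if the projection holds.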

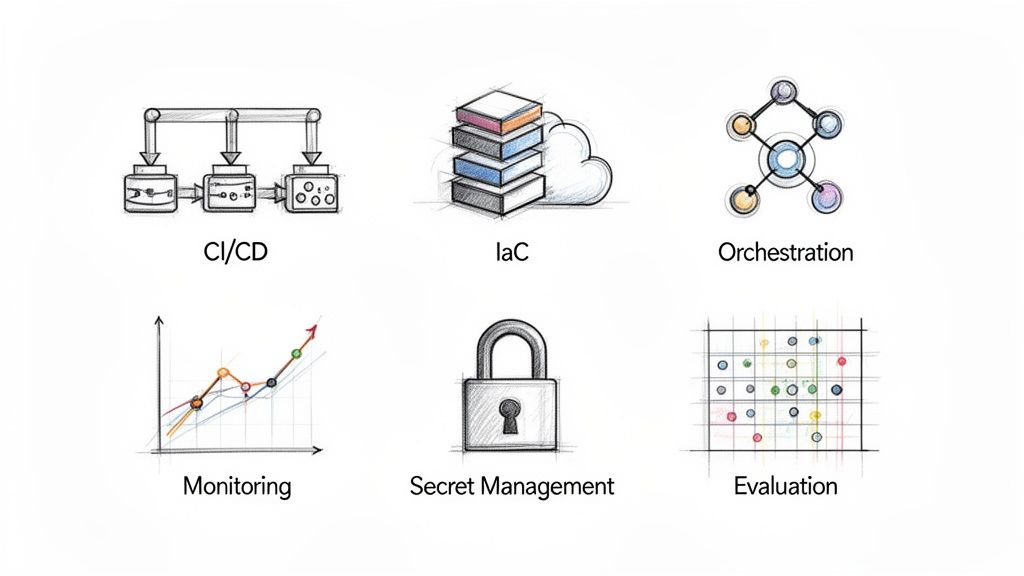

Defining The Core Tool Categories

To construct a cohesive, interoperable stack, you must decompose the landscape into functional categories. This structured methodology prevents tool redundancy and ensures complete lifecycle coverage. This guide organizes the comparison around five fundamental pillars:

- Continuous Integration/Continuous Delivery (CI/CD): The core automation engine for compiling, testing, and deploying code artifacts.

- Infrastructure as Code (IaC): The practice of defining and managing infrastructure through version-controlled, machine-readable definition files. This ensures idempotency and auditability.

- Orchestration and Containerization: Automates the deployment, scaling, networking, and lifecycle management of containerized applications.

- Security (DevSecOps): Involves "shifting security left" by integrating automated security controls and testing directly into the CI/CD pipeline.

- Monitoring and Observability: Provides deep, data-driven visibility into system performance, application health, and user experience through metrics, logs, and traces.

Choosing a tool isn't just about features; it's about adopting its underlying philosophy. Whether it's declarative vs. imperative or agent-based vs. agentless, the tool's core architecture will shape your team's workflows and operational model for years to come.

With these categories defined, we can proceed with a technical comparison of the leading tools, focusing on how each solves specific engineering problems to help you architect a modern, efficient, and resilient tech stack.

A Technical Showdown Of Core DevOps Tools

Selecting the right tool for a specific function is where DevOps strategy is implemented. A meaningful DevOps tools comparison requires moving beyond marketing claims to analyze the architectural philosophies and technical trade-offs that define each platform.

This analysis focuses on three core domains: Continuous Integration/Continuous Delivery (CI/CD), Infrastructure as Code (IaC), and Orchestration. We will compare the dominant tools by focusing on technical differentiators that impact team velocity, scalability, and operational overhead.

CI/CD: The Engine Of Automation

The CI/CD pipeline is the central nervous system of modern software delivery, automating the build, test, and deployment lifecycle. Your choice of CI/CD tool dictates how your team defines, executes, and observes these critical workflows.

While the broader DevOps market shows GitHub with 88% market adoption, the CI/CD segment remains contested. Jenkins, a long-standing incumbent, maintains a significant 46.35% market share, demonstrating the continued demand for highly extensible, specialized tooling.

Jenkins: Extensibility Is Everything

Jenkins is a battle-hardened automation server known for its unparalleled flexibility. Its power stems from a vast ecosystem of over 1,800 community-developed plugins, enabling integration with virtually any tool or system.

This extensibility comes with significant operational responsibility. You are responsible for managing the Jenkins controller, worker nodes (agents), and the dependency graph of plugins, including security patching and version compatibility. The Jenkinsfile pipeline-as-code syntax, based on a Groovy DSL, provides powerful programmatic control but presents a steeper learning curve than declarative YAML-based alternatives.

Key Differentiator: Jenkins operates on a controller-agent architecture. A central controller orchestrates jobs, which are executed by distributed agents. This model scales effectively but requires active management of agent capacity, environment provisioning (e.g., using Docker or dedicated VMs), and security isolation between jobs.
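To make the capacity problem concrete, here is a minimal sketch of a controller dispatching queued jobs to labeled agents. It is a simplified model, not Jenkins code: the Agent and Controller classes, the agent names, and the labels are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    name: str
    labels: set[str]
    executors: int                      # concurrent jobs this agent can run
    running: list[str] = field(default_factory=list)

    def has_capacity(self) -> bool:
        return len(self.running) < self.executors


@dataclass
class Controller:
    agents: list[Agent]
    queue: list[tuple[str, str]] = field(default_factory=list)  # (job, required label)

    def submit(self, job: str, label: str) -> None:
        self.queue.append((job, label))

    def dispatch(self) -> None:
        """Assign each queued job to the first matching agent with a free executor."""
        still_waiting = []
        for job, label in self.queue:
            agent = next(
                (a for a in self.agents if label in a.labels and a.has_capacity()),
                None,
            )
            if agent is None:
                still_waiting.append((job, label))   # no capacity: job stays queued
            else:
                agent.running.append(job)
        self.queue = still_waiting


# Hypothetical fleet: one Docker-capable agent and one plain Linux VM.
controller = Controller(agents=[
    Agent("docker-1", {"docker", "linux"}, executors=1),
    Agent("vm-1", {"linux"}, executors=2),
])
controller.submit("build-api", "docker")
controller.submit("build-web", "docker")
controller.submit("unit-tests", "linux")
controller.dispatch()
print(controller.queue)   # jobs still waiting for a suitable agent
```

In this run the second Docker build stays in the queue because the only Docker-labeled agent has a single executor, which is the kind of bottleneck that active capacity management is meant to prevent.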

GitHub Actions: The Ecosystem Is The Moat

GitHub Actions is deeply integrated into the GitHub platform, offering a low-friction developer experience. Its primary advantage is its native, event-driven architecture. Workflows are triggered by repository events (e.g., on: push, on: pull_request), creating a seamless, context-aware CI/CD process.

Actions draws on the GitHub Marketplace, where reusable actions such as actions/setup-node or aws-actions/configure-aws-credentials can be composed to perform common tasks. This component-based approach significantly accelerates pipeline development. Workflows are defined declaratively in YAML files within the .github/workflows directory, ensuring they are version-controlled alongside the application code. For many organizations, the GitHub vs GitLab comparison is the first major architectural decision.
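The event-driven model can be pictured as a lookup from repository events to the workflows that subscribe to them. The sketch below is an illustrative model only, not how GitHub implements it; the workflow file names are hypothetical, while push, pull_request, release, and schedule are real trigger events.

```python
# Hypothetical mapping of workflow files to the events listed under their "on:" key.
workflows = {
    "ci.yml": {"push", "pull_request"},
    "release.yml": {"release"},
    "nightly.yml": {"schedule"},
}


def workflows_for(event: str) -> list[str]:
    """Return the workflow files whose triggers include the given event."""
    return sorted(name for name, triggers in workflows.items() if event in triggers)


print(workflows_for("push"))
print(workflows_for("release"))
```

An event with no subscribers simply triggers nothing, which is why unused workflows cost nothing until their event fires.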

GitLab CI: The Integrated Platform

GitLab CI exemplifies the all-in-one platform philosophy. By bundling SCM, CI/CD, package registries, and security scanning into a single application, it provides a unified interface for the entire software development lifecycle.

Key features include the integrated container registry and Auto DevOps, which attempts to automatically generate a complete CI/CD pipeline for standard projects. Like Actions, GitLab CI uses a declarative .gitlab-ci.yml file stored in the root of the repository. It utilizes a "Runner" architecture, analogous to Jenkins agents, which can be self-hosted for greater control or consumed as a managed service.

For a more granular analysis of this category, see our guide to the best CI/CD tools.

This matrix provides a high-level summary of the key tools across these core categories.

At-A-Glance DevOps Tool Comparison Matrix

| Category | Tool | Key Technical Differentiator | Best For | Integration Ecosystem |
|---|---|---|---|---|
| CI/CD | Jenkins | Plugin-driven architecture with Groovy DSL | Highly customized, complex pipelines requiring programmatic control | Massive (1,800+ plugins) |
| CI/CD | GitHub Actions | Native Git event integration and reusable Marketplace actions | Teams fully invested in the GitHub ecosystem | Strong, Marketplace-driven |
| CI/CD | GitLab CI | All-in-one DevOps platform with built-in SCM and security | Teams seeking a single, unified toolchain to reduce integration overhead | Good, focused on the GitLab platform |
| IaC | Terraform | Cloud-agnostic state management via HCL | Multi-cloud or hybrid environments requiring a consistent workflow | Extremely broad provider network |
| IaC | Pulumi | Uses general-purpose languages (Python, Go, TS) for IaC | Dev-centric teams wanting to leverage programming constructs like loops, functions, and classes | Leverages existing cloud SDKs |
| IaC | AWS CloudFormation | Native AWS service integration and IAM-based controls | AWS-only infrastructure requiring day-one support for new services | Deep but limited to AWS services |
| Orchestration | Kubernetes | Declarative, API-driven control plane for distributed systems | Complex, scalable microservices architectures | The de facto industry standard |
| Orchestration | Docker Swarm | Simple, native Docker tooling integrated with the Docker CLI | Small-scale or simple container workloads with low operational overhead | Limited to the Docker ecosystem |

This table serves as a quick reference, but the final decision depends on the specific technical nuances explored below.

Infrastructure as Code: Provisioning At Scale

Infrastructure as Code (IaC) is a foundational DevOps practice that enables versionable, testable, and repeatable infrastructure provisioning, thereby eliminating configuration drift. The primary architectural decision revolves around declarative versus imperative models and cloud-agnostic versus cloud-native tooling.

Terraform: The Cloud-Agnostic Standard

Terraform, by HashiCorp, is the dominant tool for cloud-agnostic provisioning. It uses a declarative configuration language, HCL (HashiCorp Configuration Language), to define the desired end state of your infrastructure.

Its core technical strengths include:

- State Management: Maintains a state file (e.g., terraform.tfstate) that maps configuration to real-world resources, enabling intelligent change planning and dependency resolution.

- Provider Ecosystem: A vast network of providers allows it to manage resources across AWS, Azure, GCP, and even non-cloud platforms like Kubernetes or Datadog.

- Execution Plan: The terraform plan command provides a dry run, generating a detailed execution graph that shows precisely what resources will be created, modified, or destroyed.

Terraform is the go-to for managing complex, multi-cloud or hybrid infrastructures that require a unified provisioning workflow.
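The relationship between state and the execution plan can be sketched as a diff between the desired configuration and the recorded state. This is a deliberately simplified model, not Terraform's implementation: the real tool also refreshes state against live infrastructure and orders changes through a dependency graph. The resource addresses and attributes below are hypothetical.

```python
def plan(desired: dict[str, dict], state: dict[str, dict]) -> dict[str, list[str]]:
    """Compare desired configuration with recorded state and list the actions needed."""
    return {
        "create": sorted(desired.keys() - state.keys()),
        "update": sorted(
            name for name in desired.keys() & state.keys()
            if desired[name] != state[name]
        ),
        "destroy": sorted(state.keys() - desired.keys()),
    }


# Hypothetical recorded state and desired configuration, for illustration only.
state = {
    "aws_instance.web": {"instance_type": "t3.micro"},
    "aws_s3_bucket.logs": {"versioning": False},
}
desired = {
    "aws_instance.web": {"instance_type": "t3.small"},   # changed attribute
    "aws_sqs_queue.jobs": {"fifo": True},                 # new resource
}

changes = plan(desired, state)
for action, resources in changes.items():
    print(action, resources)
```

Because the plan is computed before anything is changed, it can be reviewed in a pull request or gated by an approval step before the apply runs.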

Pulumi: Real Programming Languages For IaC

Pulumi offers a fundamentally different approach, allowing teams to define infrastructure using general-purpose programming languages like Python, TypeScript, Go, or C#. This paradigm shift enables developers to apply familiar software engineering principles—such as loops, conditionals, functions, and unit testing—to infrastructure code.

This is particularly advantageous for creating dynamic or complex infrastructure where configurations can be programmatically generated. Under the hood, Pulumi still employs a declarative desired-state model, converging the power of imperative code with the reliability of a declarative engine.
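The idea of generating declarative definitions from ordinary code can be illustrated without any SDK. The sketch below uses plain Python dictionaries rather than the Pulumi API, and the environment names, bucket naming scheme, and attributes are assumptions made for the example.

```python
ENVIRONMENTS = ["dev", "staging", "prod"]


def bucket_spec(env: str) -> dict:
    """Build the desired-state description for one environment's bucket."""
    return {
        "name": f"app-assets-{env}",
        "versioning": env == "prod",      # conditional: only prod keeps object versions
        "tags": {"environment": env},
    }


# A loop produces one resource definition per environment.
desired_state = {spec["name"]: spec for spec in map(bucket_spec, ENVIRONMENTS)}

for name, spec in desired_state.items():
    print(name, spec)
```

Because bucket_spec is an ordinary pure function, it can be covered by a unit test like any other code, which is the main practical benefit of this approach. The output is still a declarative description that an engine could reconcile against real resources.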

AWS CloudFormation: The Native Solution

AWS CloudFormation is the native IaC service for the AWS ecosystem. Its primary benefit is deep, day-one integration with all AWS services, governed by AWS IAM for granular permissions. Infrastructure is defined as a "stack" using YAML or JSON templates.

However, its strength is also its weakness: vendor lock-in. For multi-cloud strategies, CloudFormation necessitates adopting different tools for different environments. While powerful within its ecosystem, its verbose syntax and the complexities of managing cross-stack dependencies can introduce significant architectural overhead.

Orchestration: Managing Containerized Workloads

In a microservices-driven architecture, container orchestration is non-negotiable for managing applications at scale. These platforms automate the deployment, scaling, self-healing, and networking of containerized workloads.

Kubernetes: The De Facto Standard

Kubernetes (K8s) is the undisputed industry standard for container orchestration. It provides a powerful, extensible API-driven control plane for defining complex application topologies, storage volumes, and network policies.

Its architecture is based on a declarative model. You define the desired state of your application in YAML manifests (e.g., "run three replicas of this container image and expose it via a load balancer"), and the Kubernetes controllers work continuously to reconcile the cluster's current state with your desired state. This self-healing capability is a core feature.
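The reconciliation behavior described above can be sketched as a function that compares the desired replica count with the pods currently running and corrects any difference. This is a toy model of the control-loop idea, not the Kubernetes API; the pod naming and the reconcile function are hypothetical.

```python
def reconcile(desired_replicas: int, running: list[str], next_id: int) -> tuple[list[str], int]:
    """Perform one reconciliation pass: start or stop pods until the counts match."""
    running = list(running)
    while len(running) < desired_replicas:
        running.append(f"pod-{next_id}")   # start a replacement pod
        next_id += 1
    while len(running) > desired_replicas:
        running.pop()                      # scale down surplus pods
    return running, next_id


pods, next_id = reconcile(3, [], next_id=0)     # initial rollout of three replicas
pods.remove("pod-1")                            # simulate a pod crash
pods, next_id = reconcile(3, pods, next_id)     # self-healing pass restores the count
print(pods)
```

The operator never issues a "restart pod-1" command. The controller only ever compares desired and actual state, so the same loop handles rollouts, crashes, and scale-downs.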

Key Differentiator: Kubernetes' complexity is a direct result of its power. Its vast feature set can manage applications at immense scale but introduces a steep learning curve for cluster setup, operational management, and security hardening.

Docker Swarm: Simplicity And Ease Of Use

Docker Swarm is Docker's native orchestration engine. Its primary value proposition is simplicity. For teams already proficient with the Docker CLI and Docker Compose, the learning curve for Swarm is minimal.

Integrated directly into the Docker Engine, Swarm provides basic clustering, service discovery, and rolling updates. It lacks the advanced capabilities of Kubernetes, such as sophisticated storage orchestration (CSI), network policies (CNI), or a service mesh ecosystem. However, it is an excellent choice for smaller-scale, less complex applications where the operational overhead of Kubernetes would be prohibitive.

An Evaluation Framework For Choosing The Right Tools

A superficial DevOps tools comparison based on feature checklists is a common pitfall. This approach often leads to selecting tools that, while powerful on paper, are misaligned with your team's skills, impose hidden costs, or fail to integrate with your existing environment.

To make a durable choice, you must implement a structured evaluation framework. The objective is to shift the question from "What can this tool do?" to "How will this tool perform for our team in our specific context?" This involves a holistic analysis of the tool's entire lifecycle, from implementation and integration costs to long-term maintenance and scalability.

By formulating the right technical and operational questions upfront, you can build a decision matrix that accurately reflects your organization's goals, constraints, and engineering culture.
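One simple way to build such a decision matrix is a weighted score per tool. The sketch below assumes four criteria, illustrative weights, and 1 to 5 scores; all of these values are hypothetical and should be replaced with your own priorities and evaluation results.

```python
# Hypothetical criteria weights (summing to 1.0) and 1-5 scores per candidate tool.
weights = {"team_fit": 0.4, "tco": 0.3, "integration": 0.2, "scalability": 0.1}

scores = {
    "Tool A": {"team_fit": 4, "tco": 3, "integration": 5, "scalability": 3},
    "Tool B": {"team_fit": 2, "tco": 4, "integration": 3, "scalability": 5},
}


def weighted_score(tool_scores: dict[str, int]) -> float:
    """Combine per-criterion scores into a single number using the weights."""
    return sum(weights[criterion] * tool_scores[criterion] for criterion in weights)


ranking = sorted(scores, key=lambda tool: weighted_score(scores[tool]), reverse=True)
for tool in ranking:
    print(f"{tool}: {weighted_score(scores[tool]):.2f}")
```

The weights are where your context enters the decision: a team that values scalability over team fit would rank the same two tools differently.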

Calculate The True Total Cost Of Ownership

The license fee is merely the entry point. The Total Cost of Ownership (TCO) encompasses all direct and indirect expenses incurred throughout the tool's lifecycle. These are the costs that are often overlooked during initial evaluations.

Consider an open-source tool like Jenkins. While there are no licensing fees, it can become a significant cost center when you account for the engineering hours required for installation, configuration, ongoing maintenance, plugin management, and security hardening.

A comprehensive TCO analysis must include:

- Implementation and Integration: Quantify the engineer-weeks required to integrate the tool into your existing CI/CD pipelines and workflows. Does it require custom scripting, API connectors, or middleware development?

- Training and Onboarding: What is the learning curve? Factor in the cost of formal training, the time spent on documentation, and the initial productivity dip as the team adapts to new workflows and concepts.

- Maintenance and Upgrades: Who is responsible for patching, version upgrades, and security? For self-hosted tools, this includes the underlying infrastructure costs (compute, storage, networking) and the personnel costs for system administration.

- Operational Overhead: What is the performance impact of the tool's agent or controller on your infrastructure? A resource-intensive monitoring agent could necessitate provisioning larger, more expensive instances across your entire fleet, driving up cloud costs.
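The cost components above can be combined into a rough TCO estimate. The following is a minimal sketch under stated assumptions: the field names, the hourly rate, and every number are hypothetical placeholders, and a real analysis would use your own measured figures.

```python
from dataclasses import dataclass


@dataclass
class ToolCosts:
    annual_license: float             # 0 for open-source tools
    implementation_hours: float       # one-time integration effort
    training_hours: float             # one-time onboarding effort
    annual_maintenance_hours: float   # patching, upgrades, administration
    annual_infrastructure: float      # compute, storage, networking


def total_cost_of_ownership(costs: ToolCosts, hourly_rate: float, years: int) -> float:
    """Sum one-time and recurring costs over the evaluation period."""
    one_time = (costs.implementation_hours + costs.training_hours) * hourly_rate
    recurring = (
        costs.annual_license
        + costs.annual_maintenance_hours * hourly_rate
        + costs.annual_infrastructure
    ) * years
    return one_time + recurring


# Hypothetical numbers for a self-hosted open-source tool with no license fee.
self_hosted = ToolCosts(
    annual_license=0,
    implementation_hours=200,
    training_hours=80,
    annual_maintenance_hours=300,
    annual_infrastructure=6_000,
)
tco = total_cost_of_ownership(self_hosted, hourly_rate=100, years=3)
print(f"3-year TCO: ${tco:,.0f}")
```

Even with a zero license fee, the engineering hours dominate this estimate, which is the pattern the Jenkins example above describes.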

Assess Community And Ecosystem Support

A tool's long-term viability is closely tied to the health of its community and ecosystem. A vibrant ecosystem provides a rich knowledge base, a wide array of third-party integrations, and a larger talent pool of experienced engineers.

When you choose a tool, you're not just buying software; you're investing in its community. An active ecosystem provides a safety net of shared knowledge, pre-built solutions, and a roadmap driven by real-world use cases, which is often more valuable than any single feature.

Evaluate the ecosystem with these technical criteria:

- Knowledge Base: Is the official documentation comprehensive, accurate, and up-to-date? Are there active forums, Slack/Discord channels, or community blogs where advanced technical problems are being discussed and solved?

- Integration Marketplace: Does the tool have a formal marketplace for plugins, extensions, or modules, like the GitHub Actions Marketplace or the Jenkins plugin repository? A mature marketplace can save thousands of hours of bespoke development.

- Talent Pool: How difficult is it to hire engineers with deep expertise in this tool? Adopting a niche technology can create a significant hiring and retention challenge.

Analyze Scalability And Performance Limits

A tool that excels in a startup environment may fail catastrophically at enterprise scale. You must rigorously analyze a tool's architecture for potential bottlenecks and its ability to scale horizontally and vertically. This is particularly critical for core CI/CD and infrastructure management systems, where performance directly impacts developer productivity.

Ask these specific technical questions:

- What is its architectural model (e.g., agent-based vs. agentless, centralized controller vs. decentralized)? What are the performance and security implications of this model?

- How does its control plane handle high-throughput scenarios with thousands of concurrent jobs or managed nodes? Is it susceptible to single points of failure?

- Does its declarative syntax and state management model align with your infrastructure's complexity and scale? How does it handle large, complex state files or configurations?

- What are the documented failure modes under load, and what are the mechanisms for resilience and recovery?

Integrating Security Into Your DevOps Pipeline

Traditional security models that perform a single security audit at the end of the development cycle are obsolete. A modern DevOps tools comparison must prioritize DevSecOps. The core principle is to "shift security left" by embedding automated security controls and tests directly into the CI/CD pipeline, making security a continuous, developer-centric practice rather than a final gate.

This is a market-defining trend. The DevSecOps market is projected to reach USD 41.66 billion by 2030, with adoption rates jumping from 27% to 36% in recent years as organizations recognize that secure code is a fundamental component of quality engineering.

SAST And Dependency Scanning Tools

To implement DevSecOps, you need tools that can be automated and scripted within your pipeline. Two critical categories are Static Application Security Testing (SAST) and Software Composition Analysis (SCA) for dependency scanning, dominated by tools like SonarQube and Snyk.

SonarQube: Automated Code Quality and Security Gates

SonarQube analyzes source code to identify security vulnerabilities (e.g., SQL injection, cross-site scripting), bugs, and code smells. Its primary value in a CI/CD context is the implementation of quality gates. You can configure a pipeline step in Jenkins or GitLab CI to fail the build if the SonarQube analysis introduces any new "Critical" or "High" severity vulnerabilities, thus preventing insecure code from being merged or deployed.
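The gate logic itself is simple and can be sketched independently of any scanner. The example below is not the SonarQube API: the issue format, rule names, and severity labels are assumptions, and a real pipeline would read the result from the scanner's report or quality gate status.

```python
BLOCKING_SEVERITIES = {"CRITICAL", "HIGH"}


def quality_gate_passes(new_issues: list[dict]) -> bool:
    """Return False if any newly introduced issue has a blocking severity."""
    return not any(issue["severity"] in BLOCKING_SEVERITIES for issue in new_issues)


# Hypothetical analysis result for the code changed in this build.
new_issues = [
    {"rule": "sql-injection", "severity": "CRITICAL"},
    {"rule": "unused-import", "severity": "MINOR"},
]
gate_ok = quality_gate_passes(new_issues)
print("quality gate:", "passed" if gate_ok else "failed")
```

In a CI job, a failed gate would translate into a non-zero exit code for the step, which is what stops the merge or deployment.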

Snyk: Securing Your Open-Source Supply Chain

Snyk focuses on vulnerabilities within your open-source dependencies and container base images—often the largest attack surface. It integrates directly into the build process, scanning manifest files like package.json or pom.xml and comparing dependencies against its comprehensive vulnerability database. A common CI implementation involves executing snyk test --severity-threshold=high, which will return a non-zero exit code and fail the pipeline if a high-severity vulnerability is detected.
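The exit-code contract is what makes this pattern work in any CI system. The sketch below wraps a scanner command and treats a non-zero exit status as a failed scan. So that it runs without the Snyk CLI installed, it uses a stand-in process that exits with status 1; the run_scan helper is hypothetical.

```python
import subprocess
import sys


def run_scan(command: list[str]) -> bool:
    """Run a scanner command and report whether it exited with status 0."""
    result = subprocess.run(command)
    return result.returncode == 0


# In a real pipeline the command would be the one quoted above:
#   ["snyk", "test", "--severity-threshold=high"]
# Here a stand-in process that exits with status 1 simulates a failed scan.
simulated_failure = [sys.executable, "-c", "import sys; sys.exit(1)"]
scan_passed = run_scan(simulated_failure)
print("scan passed:", scan_passed)
```

Most CI systems apply this check implicitly by failing a step on any non-zero exit code, so a wrapper like this is only needed when you want custom handling, such as posting the result to a chat channel before failing.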

For a deeper technical implementation guide, see our article on building a secure CI/CD pipeline.

The technical goal is to make security scans as routine as unit tests. By embedding API-driven tools like Snyk and SonarQube, you provide developers with immediate feedback within their existing workflow, dramatically reducing the mean time to remediation (MTTR) for vulnerabilities.

Centralizing Secrets Management

Hardcoding secrets (API keys, database credentials, certificates) in source code or CI/CD variables is a major security anti-pattern. HashiCorp Vault has become the industry standard for centralized secrets management. Applications authenticate to Vault using a role-based identity (e.g., a Kubernetes service account or an AWS IAM role) and dynamically fetch secrets at runtime.

This architecture decouples secrets from the application lifecycle and provides a centralized audit trail of all secret access. For advanced security posture, you can start aligning ISO 27001 Annex A and ASD Essential Eight controls within your pipeline, moving from ad-hoc best practices to a compliant, auditable security framework.
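The two properties described here, runtime retrieval tied to an identity and a central audit trail, can be sketched with an in-memory stand-in. This is not the Vault API: the SecretStore class, the role name, and the secret path are hypothetical, and real deployments authenticate through a platform identity rather than a plain string.

```python
from dataclasses import dataclass, field


@dataclass
class SecretStore:
    """Hypothetical in-memory stand-in for a central secrets service."""
    secrets: dict[str, str]
    policies: dict[str, set[str]]                       # role -> secret paths it may read
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def read(self, role: str, path: str) -> str:
        allowed = path in self.policies.get(role, set())
        self.audit_log.append((role, path, allowed))    # every access attempt is recorded
        if not allowed:
            raise PermissionError(f"role {role!r} may not read {path!r}")
        return self.secrets[path]


store = SecretStore(
    secrets={"db/password": "example-only"},
    policies={"payments-service": {"db/password"}},
)

# The application fetches the secret at runtime instead of embedding it in code.
password = store.read("payments-service", "db/password")
print(store.audit_log)
```

Because the secret never appears in the repository or the pipeline definition, rotating it requires no code change, and denied requests are logged alongside successful ones.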

Recommended Toolchains For Common Engineering Scenarios

Evaluating individual tools is insufficient; true engineering velocity is achieved by composing them into a cohesive, interoperable stack. A valuable DevOps tools comparison must focus on architecting functional toolchains tailored to specific use cases.

The optimal stack architecture is highly contextual, dependent on team size, budget, operational maturity, and technical requirements.

Below are three reference architectures for distinct engineering scenarios. These are not prescriptive lists but integrated toolchains whose components are chosen to work well together.

The Lean Startup Stack

For early-stage companies, the primary objectives are speed, low operational overhead, and cost control. The strategy is to leverage managed services to offload infrastructure management and focus engineering resources on product development.

- CI/CD: GitHub Actions is the optimal choice. It is co-located with the source code, requires zero server maintenance, and its generous free tier is ideal for small teams.

- IaC & Deployment: For front-end applications and serverless functions, use platforms like Vercel or Netlify. They abstract away the underlying cloud primitives, combining deployment and infrastructure into a single Git-driven workflow.

- Orchestration: Avoid it if possible. If containers are required, use a serverless container platform like AWS Fargate or Google Cloud Run. This provides the benefits of containerization without the operational burden of managing a Kubernetes cluster.

- Monitoring: Sentry for application error tracking and Google Analytics for user metrics. Both provide high-signal insights with minimal configuration overhead.

This stack is architected to minimize infrastructure headcount, enabling a small engineering team to operate at a scale that would otherwise require dedicated operations personnel.

The Enterprise Modernization Stack

Large enterprises face a different set of challenges: managing legacy systems, adhering to strict compliance regimes, and executing modernization initiatives in a hybrid-cloud environment. This stack must balance control and security with modern DevOps practices.

The core challenge for enterprise DevOps isn't just adopting new tools. It's about integrating them with existing systems of record and security protocols. This demands a toolchain that offers both deep extensibility and robust governance features.

Here’s a typical flow for baking security checks right into the pipeline.

This decision tree illustrates a shift-left security model where automated scanning and policy enforcement are embedded directly within the CI/CD pipeline, preventing vulnerabilities from reaching production environments.

- CI/CD: A self-hosted GitLab instance provides a single, auditable platform for SCM, CI/CD, and security scanning, which is critical for meeting compliance requirements like SOC 2 or ISO 27001.

- IaC: Terraform Enterprise offers the cloud-agnostic provisioning necessary for hybrid environments, along with essential governance features like policy as code via Sentinel.

- Orchestration: A self-managed Kubernetes cluster, either on-premises (e.g., with VMware Tanzu) or within a cloud VPC. Our analysis of Kubernetes cluster management tools can help inform this decision.

- Monitoring: A self-hosted stack of Prometheus for metrics collection and Grafana for visualization provides powerful, customizable observability without exporting sensitive performance data to a third-party SaaS provider.

The Cloud-Native Scale-Up Stack

This architecture is designed for companies that have achieved product-market fit and are now focused on scaling rapidly and reliably in a public cloud environment. The toolchain prioritizes performance, deep observability, and developer productivity in a microservices architecture.

- CI/CD: CircleCI is a best-in-class solution for performance-critical pipelines. Its advanced caching mechanisms and test parallelization can significantly reduce build and test times for large monorepos or complex microservices builds.

- IaC: Pulumi is an excellent choice for this scenario, as it allows engineering teams to use general-purpose programming languages (like TypeScript or Python) for IaC. This enables higher levels of abstraction, code reuse, and the ability to build internal infrastructure platforms.

- Orchestration: A managed Kubernetes service like Amazon EKS or Google GKE is the standard. This provides a scalable, resilient, and secure control plane without the operational overhead of managing the underlying Kubernetes components.

- Observability: Datadog provides a unified platform for metrics, logs, and distributed tracing. This is critical for debugging complex, emergent behaviors in a distributed microservices environment.

Accelerating Your DevOps Maturity With Expert Implementation

Completing a detailed DevOps tools comparison is a critical first step, but a well-designed toolchain does not guarantee successful outcomes. The primary challenge, and the point where most DevOps initiatives stall, is bridging the gap between tool acquisition and measurable business impact.

The right tools implemented poorly, or with low team adoption, can create more operational friction than they resolve. Misconfigured pipelines, fragile integrations, or a lack of standardized workflows can completely negate the potential ROI of your investment.

Consider a platform like Kubernetes. Its power is undeniable, but without a robust architecture designed for security, scalability, and cost-efficiency from day one, it can quickly devolve into a source of significant operational complexity and financial waste.

Bridging The Gap Between Tools And Outcomes

Ultimately, the objective is not tool acquisition but the development of a mature, scalable, and cost-effective engineering practice. This requires a level of implementation expertise that extends beyond product documentation. It involves de-risking complex tool adoptions and having the senior engineering talent to execute correctly.

This is where a strategic implementation partnership becomes a powerful accelerator. At OpsMoon, we bridge this execution gap. Our model provides access to the senior engineering talent required to ensure your chosen toolchain delivers maximum technical and business impact.

The most expensive tool is the one your team can't use effectively. True DevOps maturity comes from translating a tool's potential into tangible outcomes like faster release cycles, improved system reliability, and lower operational overhead.

Your Strategic Implementation Partner

We help you avoid common implementation pitfalls and accelerate your journey to DevOps maturity. Our team ensures that complex platforms like Kubernetes and Terraform are not merely installed but are architected from the ground up for security, scalability, and cost-efficiency.

Working with OpsMoon provides more than just implementation support; it provides a partnership focused on achieving specific engineering outcomes without the cost and lead time of building a large, specialized in-house team. We provide the expert capacity to transform your toolchain vision into a high-performing operational reality.

Common Questions About DevOps Tools

Should I Go With An All-In-One DevOps Platform Or A Best-Of-Breed Toolchain?

This is the fundamental trade-off between integration simplicity and functional depth.

An all-in-one platform like GitLab offers a unified user experience and data model, which reduces vendor management complexity and integration overhead. This is advantageous for teams prioritizing a single source of truth and streamlined workflows.

Conversely, a best-of-breed approach allows you to select the most powerful tool for each specific function—for example, Jenkins for CI/CD, Terraform for IaC, and Snyk for security. This provides maximum flexibility and performance for complex requirements but places the integration burden on your team. This approach requires a higher level of in-house expertise.

What's The Biggest Mistake Teams Make When Picking DevOps Tools?

The most common error is focusing exclusively on feature lists while ignoring the tool's impact on developer workflow and operational overhead. A technically powerful tool with a steep learning curve or poor user experience can decrease team velocity and cause widespread frustration.

A rigorous evaluation must consider the Total Cost of Ownership (TCO), which includes not only licensing fees but also the engineering hours required for training, integration, and ongoing maintenance.

How Should We Handle Migrating From An Old Toolset To A New One?

A "big bang" migration is high-risk. A phased, parallel migration is the recommended approach.

Begin by identifying a single, non-critical application or service to serve as a pilot for the new toolchain. Implement the full lifecycle for this pilot, running the old and new systems in parallel for a period. This allows you to validate the new workflow's functionality and performance while enabling your team to build proficiency and confidence before migrating mission-critical systems.

Ready to bridge the gap between picking tools and actually making them work? OpsMoon provides the senior engineering capacity to de-risk tool adoption and build a mature, scalable DevOps practice. Start your journey with a free work planning session.
